
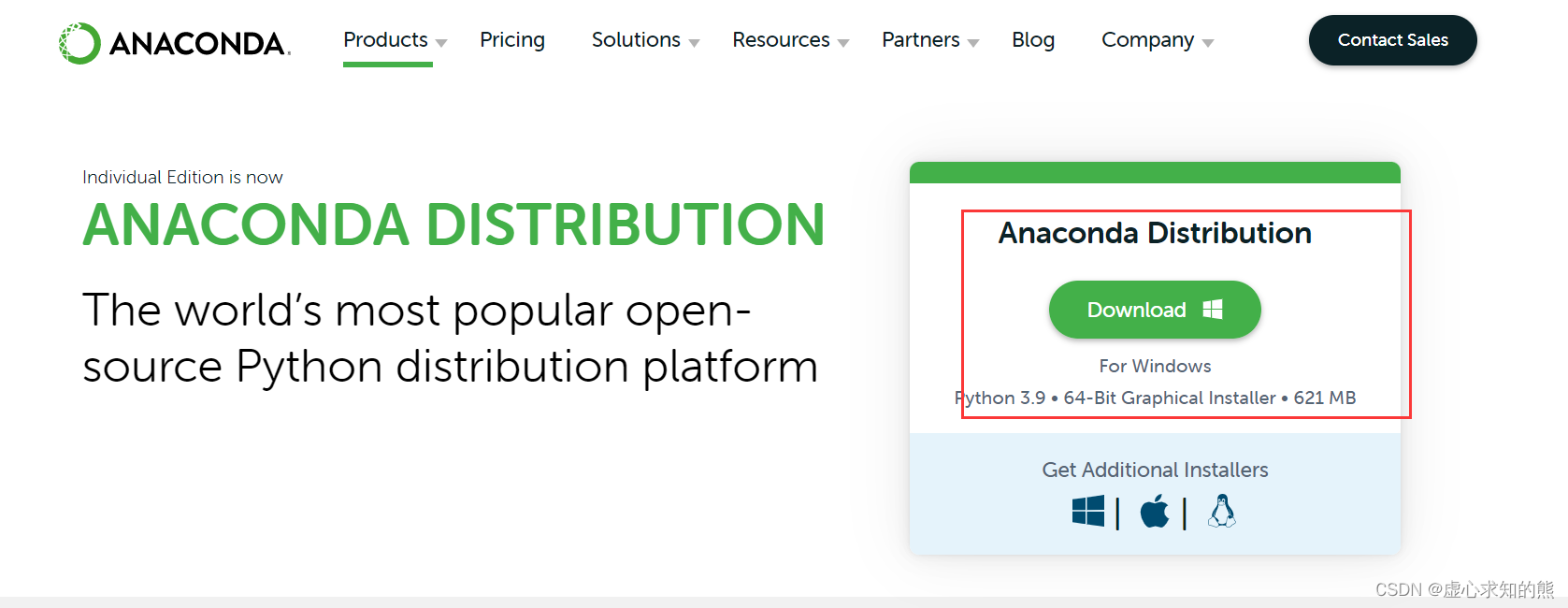

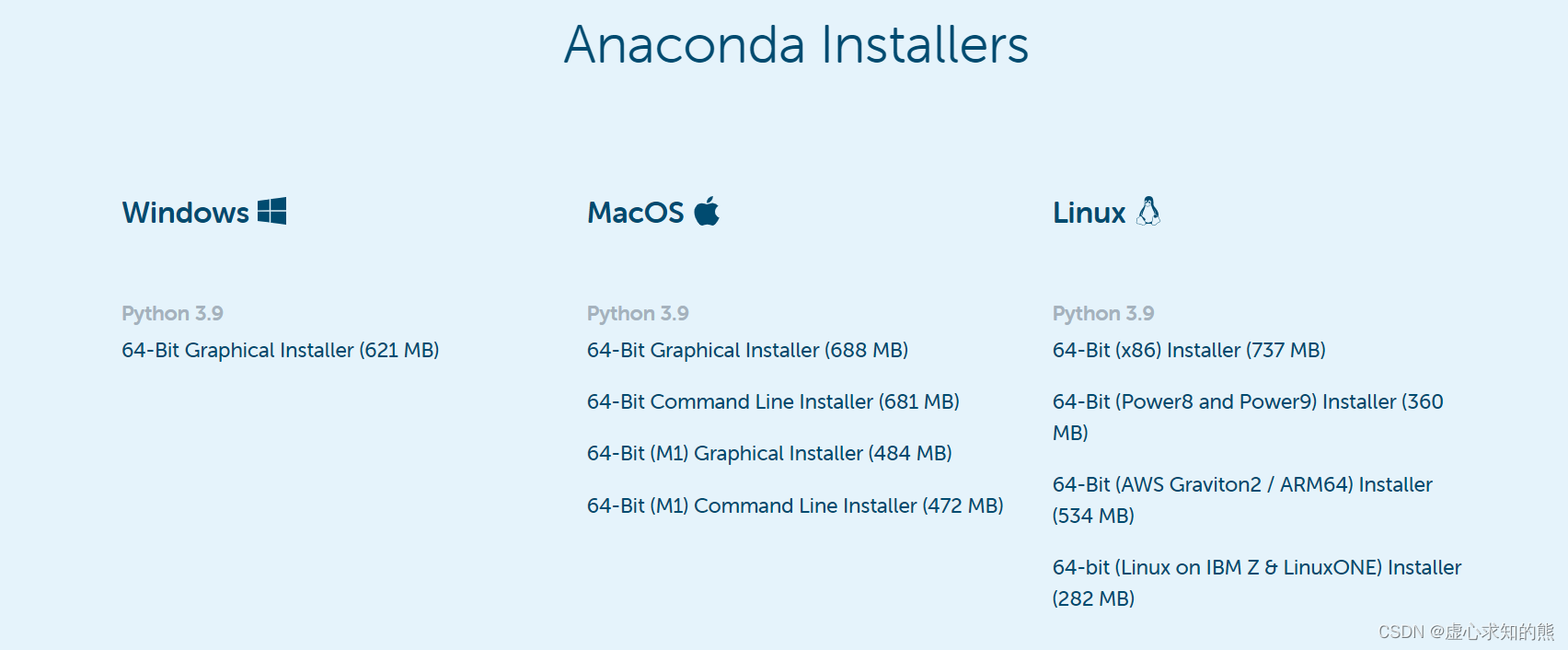

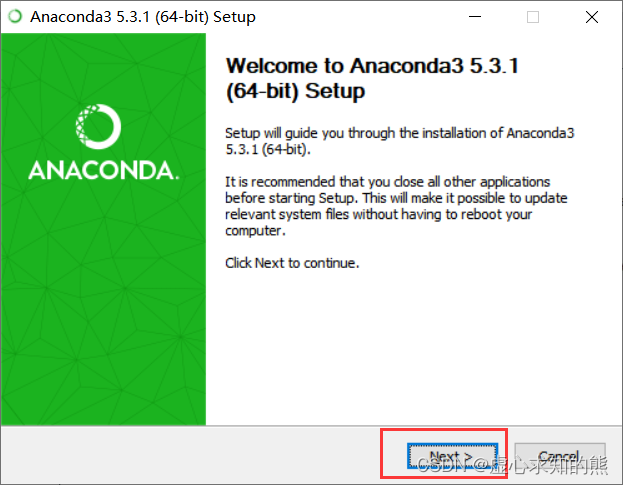

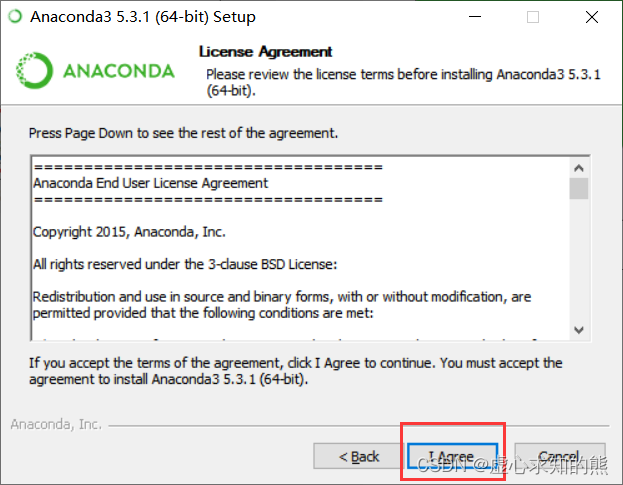

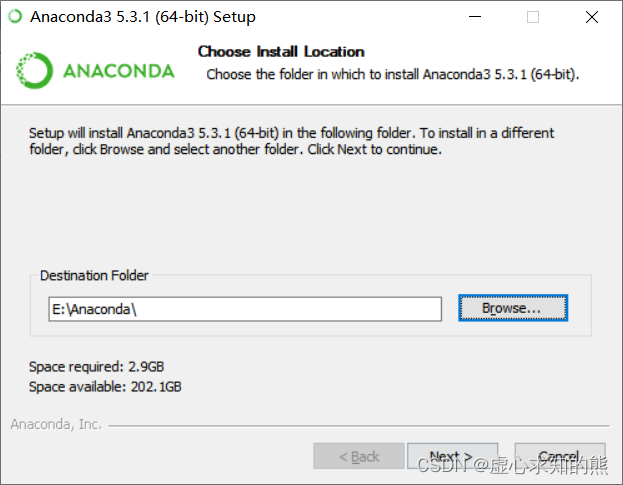

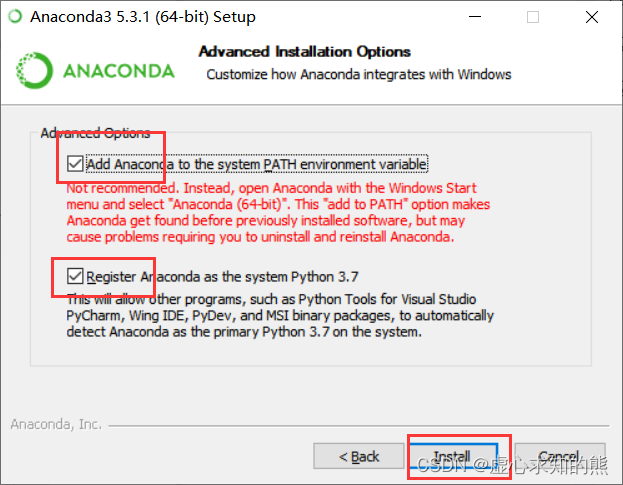

Contents: I. Introduction to PyTorch; II. PyTorch software stack: 1. Downloading Anaconda, 2. Installing Anaconda, 3. Anaconda Navigator fails to open (the fix does not apply to every setup), 4.

This article is an entry in the AI (PyTorch) track of the CSDN 新星计划 (Rising Star Program): https://bbs.csdn.net/topics/613989052

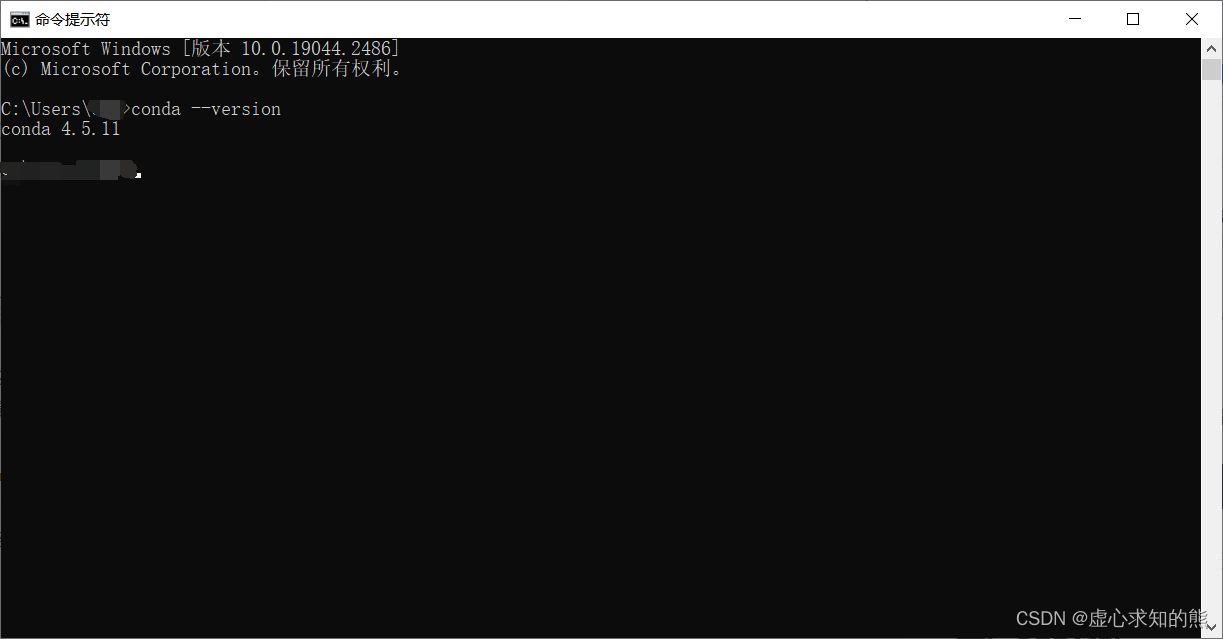

conda --version

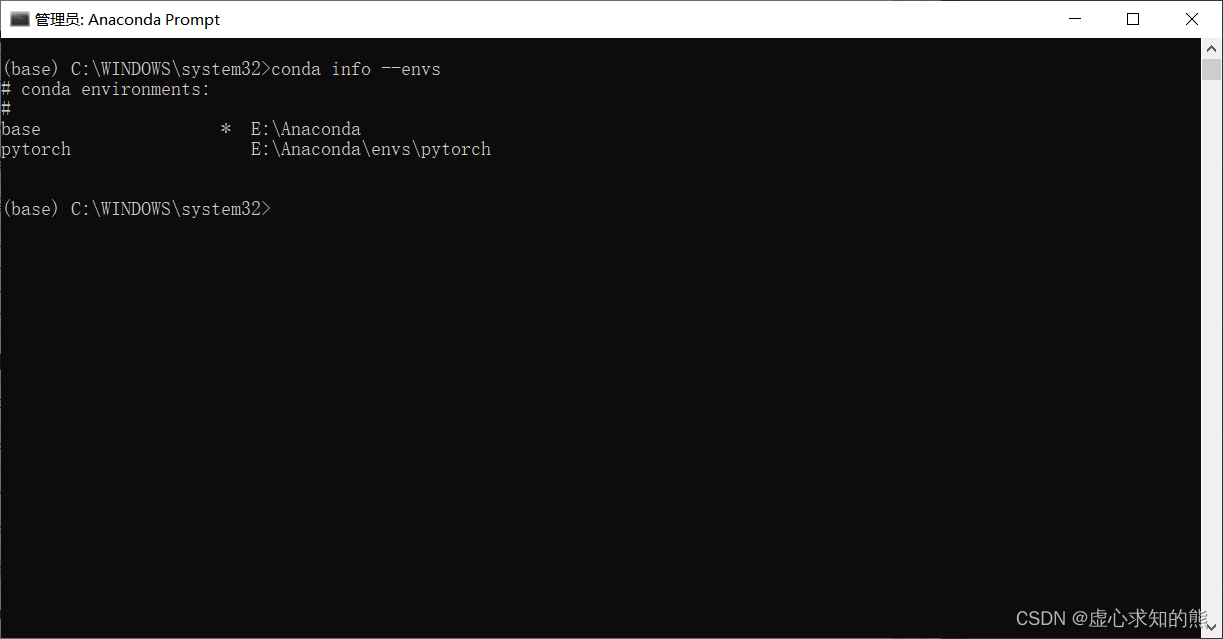

conda config --add channels https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/free/

conda config --add channels https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main/

conda config --set show_channel_urls yes

conda config --add channels https://mirrors.tuna.tsinghua.edu.cn/anaconda/cloud/pytorch/

conda create -n pytorch python=3.7

conda info --envs

activate pytorch

pip3 install torch torchvision torchaudio

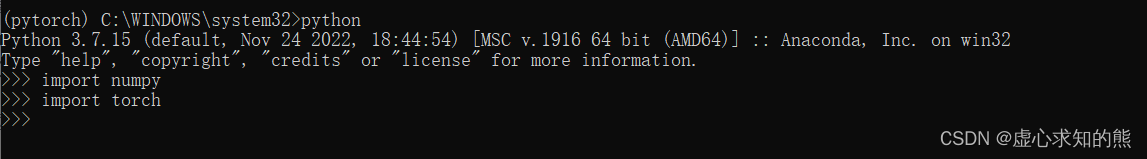

conda activate pytorch # pytorch is the name of the environment created earlier; replace it with your own environment name

conda install nb_conda

jupyter notebook

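torch.__version__ reports the installed PyTorch version. Mine is the CPU build of 1.13.1:

    import torch
    torch.__version__
    #'1.13.1+cpu'

torch.empty() creates a matrix without initializing it, so it contains whatever values were already in memory.

    x = torch.empty(5, 3)
    x
    #tensor([[8.9082e-39, 9.9184e-39, 8.4490e-39],
    #        [9.6429e-39, 1.0653e-38, 1.0469e-38],
    #        [4.2246e-39, 1.0378e-38, 9.6429e-39],
    #        [9.2755e-39, 9.7346e-39, 1.0745e-38],
    #        [1.0102e-38, 9.9184e-39, 6.2342e-19]])

torch.rand() creates a matrix of random values.

    x = torch.rand(5, 3)
    x
    #tensor([[0.1452, 0.4816, 0.4507],
    #        [0.1991, 0.1799, 0.5055],
    #        [0.6840, 0.6698, 0.3320],
    #        [0.5095, 0.7218, 0.6996],
    #        [0.2091, 0.1717, 0.0504]])

torch.zeros() creates an all-zero matrix.

    x = torch.zeros(5, 3, dtype=torch.long)
    x
    #tensor([[0, 0, 0],
    #        [0, 0, 0],
    #        [0, 0, 0],
    #        [0, 0, 0],
    #        [0, 0, 0]])

torch.tensor() builds a tensor directly from data.

    x = torch.tensor([5.5, 3])
    x
    #tensor([5.5000, 3.0000])

size() returns the shape of a matrix, that is, how many rows and columns it has.

    x = torch.rand(5, 3)  # rebind x to a 5x3 matrix; the 1-D tensor above would report torch.Size([2])
    x.size()
    #torch.Size([5, 3])

view() changes the dimensions of a matrix. A dimension given as -1 is inferred from the others.

    x = torch.randn(4, 4)
    y = x.view(16)
    z = x.view(-1, 8)
    print(x.size(), y.size(), z.size())
    #torch.Size([4, 4]) torch.Size([16]) torch.Size([2, 8])

Converting a tensor to a NumPy array:

    import numpy as np
    a = torch.ones(5)
    b = a.numpy()
    b
    #array([1., 1., 1., 1., 1.], dtype=float32)

Converting a NumPy array to a tensor:

    import numpy as np
    a = np.ones(5)
    b = torch.from_numpy(a)
    b
    #tensor([1., 1., 1., 1., 1.], dtype=torch.float64)

The examples that follow use these imports:

    import torch
    from torch import tensor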
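One detail the view() and NumPy conversions above do not spell out is that they share memory with the original object instead of copying it. The following is a minimal sketch of that behavior, assuming CPU tensors; the variable names are illustrative and not taken from the article.

```python
import numpy as np
import torch

# Tensor -> NumPy: the array is a view onto the tensor's memory (CPU tensors only).
a = torch.ones(5)
b = a.numpy()
a.add_(1)              # in-place update of the tensor
print(b)               # the NumPy array reflects the change

# NumPy -> tensor: torch.from_numpy also shares memory with the source array.
c = np.ones(5)
d = torch.from_numpy(c)
np.add(c, 1, out=c)    # in-place update of the array
print(d)               # the tensor reflects the change

# view() returns a tensor backed by the same storage as the original.
x = torch.zeros(4, 4)
y = x.view(16)
y[0] = 100.0           # writing through the view
print(x[0, 0])         # the original tensor sees the write
```

If an independent copy is needed, calling `.clone()` on the tensor or `.copy()` on the array before modifying it avoids these side effects.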

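tensor() called with a single number creates a scalar.

    x = tensor(42.)
    x
    #tensor(42.)

dim() returns its number of dimensions.

    x.dim()
    #0

item() converts a one-element tensor into a plain Python number.

    x.item()
    #42.0

A vector such as [-5., 2., 0.] usually represents a feature in deep learning, for example a word-vector feature or the features along one dimension.

    v = tensor([1.5, -0.5, 3.0])
    v
    #tensor([ 1.5000, -0.5000, 3.0000])
    v.dim()
    #1
    v.size()
    #torch.Size([3])

A matrix is a 2-dimensional tensor.

    M = tensor([[1., 2.], [3., 4.]])
    M
    #tensor([[1., 2.],
    #        [3., 4.]])

matmul() performs matrix multiplication, whereas * multiplies element by element.

    M.matmul(M)
    #tensor([[ 7., 10.],
    #        [15., 22.]])
    M * M
    #tensor([[ 1., 4.],
    #        [ 9., 16.]])

For autograd, a tensor can request gradient tracking in two ways.

    # Option 1: pass requires_grad=True at creation
    x = torch.randn(3,4,requires_grad=True)
    x
    #tensor([[-0.4847, 0.7512, -1.0082, 2.2007],
    #        [ 1.0067, 0.3669, -1.5128, -1.3823],
    #        [ 0.8001, -1.6713, 0.0755, 0.9826]], requires_grad=True)

    # Option 2: set the attribute afterwards
    x = torch.randn(3,4)
    x.requires_grad=True
    x
    #tensor([[ 0.6438, 0.4278, 0.8278, -0.1493],
    #        [-0.8396, 1.3533, 0.6111, 1.8616],
    #        [-1.0954, 1.8096, 1.3869, -1.7984]], requires_grad=True)

Calling backward() on a scalar result fills in the .grad of every tensor that requires gradients.

    b = torch.randn(3,4,requires_grad=True)
    t = x + b
    y = t.sum()
    y
    #tensor(7.9532, grad_fn=<SumBackward0>)
    y.backward()
    b.grad
    #tensor([[1., 1., 1., 1.],
    #        [1., 1., 1., 1.],
    #        [1., 1., 1., 1.]])
    x.requires_grad, b.requires_grad, t.requires_grad
    #(True, True, True)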
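The backward() example above runs once, so it does not show that gradients are added to .grad on each call instead of overwriting it. The sketch below illustrates that accumulation and one way to reset it; the names `first` and `second` are illustrative, and rebuilding the graph before the second call is just one of several ways to run backward() again.

```python
import torch

x = torch.randn(3, 4, requires_grad=True)
b = torch.randn(3, 4, requires_grad=True)

# First backward pass: d(sum(x + b)) / db is 1 for every element.
y = (x + b).sum()
y.backward()
first = b.grad.clone()

# Second backward pass on a freshly built graph: the new gradient is
# added to the existing b.grad instead of replacing it.
y = (x + b).sum()
y.backward()
second = b.grad.clone()

print(first)
print(second)

# Reset the accumulated gradient in place before the next pass.
b.grad.zero_()
print(b.grad)
```

This is why a training loop clears gradients on every iteration, typically through the optimizer's zero_grad() method.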

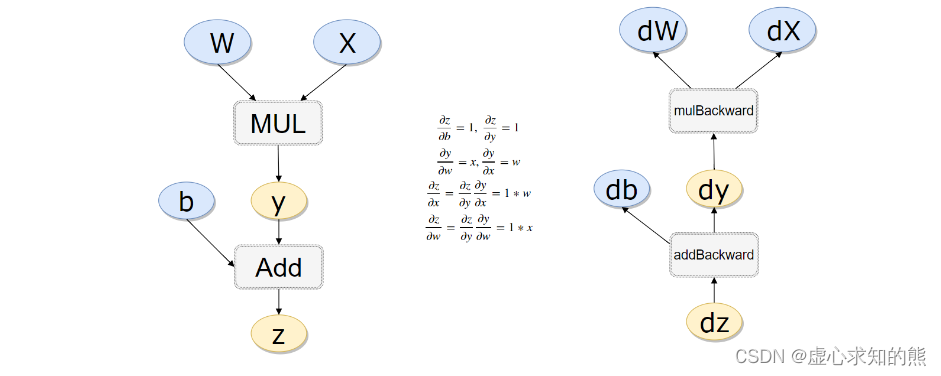

    # Computation graph: y = w * x, z = y + b
    x = torch.rand(1)
    b = torch.rand(1, requires_grad = True)
    w = torch.rand(1, requires_grad = True)
    y = w * x
    z = y + b
    x.requires_grad, b.requires_grad, w.requires_grad, y.requires_grad  # note that y requires grad as well
    #(False, True, True, True)
    x.is_leaf, w.is_leaf, b.is_leaf, y.is_leaf, z.is_leaf
    #(True, True, True, False, False)
    z.backward(retain_graph=True)  # gradients accumulate across calls unless they are cleared
    w.grad
    #tensor([0.7954])
    b.grad
    #tensor([1.])

    # Training data for a linear regression: y = 2x + 1
    x_values = [i for i in range(11)]
    x_train = np.array(x_values, dtype=np.float32)
    x_train = x_train.reshape(-1, 1)
    x_train.shape
    #(11, 1)
    y_values = [2*i + 1 for i in x_values]
    y_train = np.array(y_values, dtype=np.float32)
    y_train = y_train.reshape(-1, 1)
    y_train.shape
    #(11, 1)

    import torch
    import torch.nn as nn

    class LinearRegressionModel(nn.Module):
        def __init__(self, input_dim, output_dim):
            super(LinearRegressionModel, self).__init__()
            self.linear = nn.Linear(input_dim, output_dim)

        def forward(self, x):
            out = self.linear(x)
            return out

    input_dim = 1
    output_dim = 1
    model = LinearRegressionModel(input_dim, output_dim)
    model
    #LinearRegressionModel(
    #  (linear): Linear(in_features=1, out_features=1, bias=True)
    #)

    epochs = 1000
    learning_rate = 0.01
    optimizer = torch.optim.SGD(model.parameters(), lr=learning_rate)
    criterion = nn.MSELoss()

    for epoch in range(epochs):
        epoch += 1
        # convert the NumPy arrays to tensors
        inputs = torch.from_numpy(x_train)
        labels = torch.from_numpy(y_train)
        # zero the gradients on every iteration
        optimizer.zero_grad()
        # forward pass
        outputs = model(inputs)
        # compute the loss
        loss = criterion(outputs, labels)
        # backward pass
        loss.backward()
        # update the weights
        optimizer.step()
        if epoch % 50 == 0:
            print('epoch {}, loss {}'.format(epoch, loss.item()))
    #epoch 50, loss 0.22157077491283417
    #epoch 100, loss 0.12637567520141602
    #epoch 150, loss 0.07208002358675003
    #epoch 200, loss 0.04111171141266823
    #epoch 250, loss 0.023448562249541283
    #epoch 300, loss 0.01337424572557211
    #epoch 350, loss 0.007628156337887049
    #epoch 400, loss 0.004350822884589434
    #epoch 450, loss 0.0024815555661916733
    #epoch 500, loss 0.0014153871452435851
    #epoch 550, loss 0.000807293108664453
    #epoch 600, loss 0.00046044986811466515
    #epoch 650, loss 0.00026261876337230206
    #epoch 700, loss 0.0001497901976108551
    #epoch 750, loss 8.543623698642477e-05
    #epoch 800, loss 4.8729089030530304e-05
    #epoch 900, loss 1.58514467329951e-05
    #epoch 950, loss 9.042541933013126e-06
    #epoch 1000, loss 5.158052317710826e-06

    # Predict with the trained model
    predicted = model(torch.from_numpy(x_train).requires_grad_()).data.numpy()
    predicted
    #array([[ 0.9957756],
    #       [ 2.9963837],
    #       [ 4.996992 ],
    #       [ 6.9976   ],
    #       [ 8.998208 ],
    #       [10.9988165],
    #       [12.999424 ],
    #       [15.000032 ],
    #       [17.00064  ],
    #       [19.00125  ],
    #       [21.001858 ]], dtype=float32)

    # Save and reload the model parameters
    torch.save(model.state_dict(), 'model.pkl')
    model.load_state_dict(torch.load('model.pkl'))
    #<All keys matched successfully>

    # The same training run on a GPU when one is available:
    # move both the model and the data to the device
    import torch
    import torch.nn as nn
    import numpy as np

    class LinearRegressionModel(nn.Module):
        def __init__(self, input_dim, output_dim):
            super(LinearRegressionModel, self).__init__()
            self.linear = nn.Linear(input_dim, output_dim)

        def forward(self, x):
            out = self.linear(x)
            return out

    input_dim = 1
    output_dim = 1
    model = LinearRegressionModel(input_dim, output_dim)
    device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
    model.to(device)
    criterion = nn.MSELoss()
    learning_rate = 0.01
    optimizer = torch.optim.SGD(model.parameters(), lr=learning_rate)
    epochs = 1000

    for epoch in range(epochs):
        epoch += 1
        inputs = torch.from_numpy(x_train).to(device)
        labels = torch.from_numpy(y_train).to(device)
        optimizer.zero_grad()
        outputs = model(inputs)
        loss = criterion(outputs, labels)
        loss.backward()
        optimizer.step()
        if epoch % 50 == 0:
            print('epoch {}, loss {}'.format(epoch, loss.item()))
    #epoch 50, loss 0.057580433785915375
    #epoch 100, loss 0.03284168243408203
    #epoch 150, loss 0.01873171515762806
    #epoch 200, loss 0.010683886706829071
    #epoch 250, loss 0.006093675270676613
    #epoch 300, loss 0.0034756092354655266
    #epoch 350, loss 0.0019823340699076653
    #epoch 400, loss 0.0011306683300063014
    #epoch 450, loss 0.0006449012435041368
    #epoch 500, loss 0.0003678193606901914
    #epoch 550, loss 0.0002097855758620426
    #epoch 600, loss 0.00011965946032432839
    #epoch 650, loss 6.825226591899991e-05
    #epoch 700, loss 3.892400854965672e-05
    #epoch 750, loss 2.2203324988367967e-05
    #epoch 800, loss 1.2662595509027597e-05
    #epoch 850, loss 7.223141892609419e-06
    #epoch 900, loss 4.118806373298867e-06
    #epoch 950, loss 2.349547230551252e-06
    #epoch 1000, loss 1.3400465377344517e-06

Source: https://blog.csdn.net/weixin_45891612/article/details/129567378
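The training loops above leave the gradient computation to loss.backward() and the parameter update to optimizer.step(). For intuition, the sketch below writes out the same full-batch gradient descent on the same data (y = 2x + 1) by hand in plain Python. It is an illustration, not the article's code: the zero starting values for `w` and `b` are an assumption (nn.Linear starts from random values), and the gradient formulas are those of the mean squared error.

```python
# Training data: x = 0..10, y = 2x + 1
xs = [float(i) for i in range(11)]
ys = [2.0 * x + 1.0 for x in xs]

w, b = 0.0, 0.0        # assumed starting point, chosen for reproducibility
learning_rate = 0.01
n = len(xs)

for epoch in range(1, 1001):
    # Forward pass: predictions and mean squared error.
    errors = [(w * x + b) - y for x, y in zip(xs, ys)]
    loss = sum(e * e for e in errors) / n

    # Backward pass: gradients of the MSE with respect to w and b.
    grad_w = 2.0 * sum(e * x for e, x in zip(errors, xs)) / n
    grad_b = 2.0 * sum(errors) / n

    # Update step: what plain SGD does with those gradients.
    w -= learning_rate * grad_w
    b -= learning_rate * grad_b

    if epoch % 250 == 0:
        print(f"epoch {epoch}, loss {loss:.8f}, w {w:.4f}, b {b:.4f}")
```

After 1000 epochs `w` and `b` should be close to 2 and 1, which matches the predictions of roughly 2x + 1 produced by the trained model.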


Article title: PyTorch: introduction, related software stack, basic usage, tensor shapes, and the autograd mechanism
