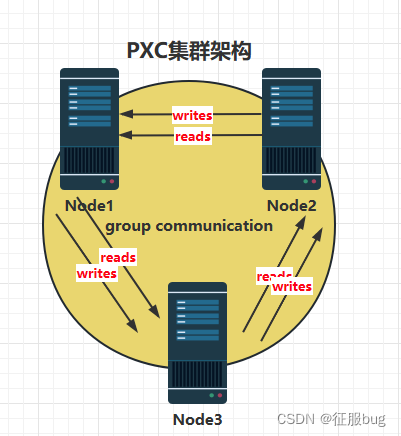

Deploying a PXC Cluster for MySQL

I. Understanding PXC

1. Introduction to PXC

Percona XtraDB Cluster (PXC) is a Galera-based high-availability cluster solution for MySQL.

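A PXC cluster is built from two main components: Percona Server with XtraDB (the data storage engine) and the Write Set Replication patches (the synchronous, multi-master replication plugin).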

Official website: http://galeracluster.com

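Key features of PXC:

- Strong data consistency with no replication lag: data written on one node must be present on all the other nodes immediately.
- No master/slave failover and no virtual IP: there is no one-master, many-slaves topology, so no VIP address is needed.
- Supports the InnoDB storage engine.
- Simple to deploy and use.
- Nodes join automatically with no manual data copying: a node that was down resynchronizes the data it missed on its own, with no manual configuration.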

Cluster hosts:

| IP address | Hostname |
|---|---|
| 192.168.2.1 | my01 |
| 192.168.2.2 | my02 |
| 192.168.2.3 | my03 |
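Every node's /etc/hosts has to contain these three mappings. As an alternative to editing the file by hand in vi, here is a minimal sketch of an idempotent helper. `ensure_host_entry` is a hypothetical name (not part of PXC or CentOS), and it assumes the usual "IP hostname [aliases...]" hosts-file layout.

```bash
#!/usr/bin/env bash
# ensure_host_entry FILE IP NAME  (hypothetical helper)
# Appends "IP NAME" to FILE unless some line already maps IP to NAME
# (NAME may appear as the hostname or as an alias on that line).
# Calling it again with the same arguments leaves FILE unchanged.
ensure_host_entry() {
  local file="$1" ip="$2" name="$3"
  if awk -v ip="$ip" -v name="$name" '
       $1 == ip { for (i = 2; i <= NF; i++) if ($i == name) found = 1 }
       END { exit !found }' "$file" 2>/dev/null; then
    return 0   # mapping already present
  fi
  printf '%s %s\n' "$ip" "$name" >> "$file"
}
```

Usage on each node: `for e in "192.168.2.1 my01" "192.168.2.2 my02" "192.168.2.3 my03"; do ensure_host_entry /etc/hosts $e; done`, with `$e` deliberately unquoted so it splits into the IP and the name.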

Perform the following steps on every node.

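Set the hostname (my01 here; use my02 and my03 on the other nodes) and add all three nodes to /etc/hosts:

```bash
[root@localhost ~]# hostnamectl set-hostname my01
[root@localhost ~]# bash
[root@my01 ~]# vi /etc/hosts
192.168.2.1 my01
192.168.2.2 my02
192.168.2.3 my03
```

Download percona-xtrabackup and Percona-XtraDB-Cluster in the matching versions from the Percona download page: https://www.percona.com/downloads

qpress-1.1-14.11.x86_64.rpm has to be downloaded separately with wget.

| Package | Purpose |
|---|---|
| percona-xtrabackup-24-2.4.28-1.el7.x86_64.rpm | Online hot-backup tool |
| qpress-1.1-14.11.x86_64.rpm | Compression utility |
| Percona-XtraDB-Cluster-5.7.41-31.65-r654-el7-x86_64-bundle.tar | Cluster server packages |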
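The xtrabackup-v2 SST method configured later depends on these tools (plus socat) being installed on every node, and a missing socat is exactly what one of the error logs at the end of this guide shows. A minimal pre-flight check to run after the installation steps, as a sketch: `missing_cmds` is a hypothetical helper, and it assumes the packages install binaries named `socat`, `xtrabackup` and `qpress`.

```bash
#!/usr/bin/env bash
# missing_cmds CMD...  (hypothetical helper, not a PXC tool)
# Prints every CMD that cannot be found on PATH, one per line.
# Returns 0 only when all of them are present.
missing_cmds() {
  local cmd status=0
  for cmd in "$@"; do
    if ! command -v "$cmd" >/dev/null 2>&1; then
      echo "$cmd"
      status=1
    fi
  done
  return "$status"
}
```

Usage: `missing_cmds socat xtrabackup qpress || echo "install the missing tools before starting mysql"`.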

Download qpress and check the packages in /usr/local/src:

```bash
[root@my01 ~]# cd /usr/local/src/
[root@my01 src]# wget ftp://ftp.pbone.net/mirror/ftp5.gwdg.de/pub/opensuse/repositories/home%3A/AndreasStieger%3A/branches%3A/Archiving/RedHat_RHEL-6/x86_64/qpress-1.1-14.11.x86_64.rpm
[root@my01 src]# ls
percona-xtrabackup-24-2.4.28-1.el7.x86_64.rpm  Percona-XtraDB-Cluster-5.7.41-31.65-r654-el7-x86_64-bundle.tar  qpress-1.1-14.11.x86_64.rpm
```

Copy the packages to the other nodes:

```bash
[root@my01 src]# scp -r /usr/local/src/ 192.168.2.2:/usr/local/
[root@my01 src]# scp -r /usr/local/src/ 192.168.2.3:/usr/local/
```

Install the dependencies, XtraBackup and qpress, then unpack and install the PXC bundle:

```bash
[root@my01 src]# yum -y install openssl
[root@my01 src]# yum -y install openssl-devel
[root@my01 src]# yum -y install socat
[root@my01 src]# yum -y localinstall percona-xtrabackup-24-2.4.28-1.el7.x86_64.rpm
[root@my01 src]# yum -y localinstall qpress-1.1-14.11.x86_64.rpm
[root@my01 src]# mkdir per-cluster
[root@my01 src]# tar -xf Percona-XtraDB-Cluster-5.7.41-31.65-r654-el7-x86_64-bundle.tar -C per-cluster/
[root@my01 src]# cd per-cluster/
[root@my01 per-cluster]# ls
Percona-XtraDB-Cluster-57-5.7.41-31.65.1.el7.x86_64.rpm             Percona-XtraDB-Cluster-garbd-57-5.7.41-31.65.1.el7.x86_64.rpm
Percona-XtraDB-Cluster-57-debuginfo-5.7.41-31.65.1.el7.x86_64.rpm   Percona-XtraDB-Cluster-server-57-5.7.41-31.65.1.el7.x86_64.rpm
Percona-XtraDB-Cluster-client-57-5.7.41-31.65.1.el7.x86_64.rpm      Percona-XtraDB-Cluster-shared-57-5.7.41-31.65.1.el7.x86_64.rpm
Percona-XtraDB-Cluster-devel-57-5.7.41-31.65.1.el7.x86_64.rpm       Percona-XtraDB-Cluster-shared-compat-57-5.7.41-31.65.1.el7.x86_64.rpm
Percona-XtraDB-Cluster-full-57-5.7.41-31.65.1.el7.x86_64.rpm        Percona-XtraDB-Cluster-test-57-5.7.41-31.65.1.el7.x86_64.rpm
[root@my01 per-cluster]# rpm -ivh ./* --nodeps --force
```

Edit mysqld.cnf:

```bash
[root@my01 per-cluster]# vi /etc/percona-xtradb-cluster.conf.d/mysqld.cnf
# Template my.cnf for PXC
# Edit to your requirements.
[client]
socket=/var/lib/mysql/mysql.sock

[mysqld]
# Only this line needs changing: server-id must be different on every node
server-id=1
datadir=/var/lib/mysql
socket=/var/lib/mysql/mysql.sock
pid-file=/var/run/mysqld/mysqld.pid
log-bin
log_slave_updates
expire_logs_days=7

# Disabling symbolic-links is recommended to prevent assorted security risks
symbolic-links=0
```

Configuration requirements for wsrep.cnf:

```
wsrep_cluster_address=gcomm://        # cluster member list; must be identical on all 3 nodes
wsrep_node_address=192.168.70.63      # this node's IP address
wsrep_cluster_name=pxc-cluster        # cluster name; any name, but identical on all 3 nodes
wsrep_node_name=pxc-cluster-node      # this node's hostname
wsrep_sst_auth="sstuser:s3cretPass"   # SST (data sync) user credentials; identical on all 3 nodes
```

Edit wsrep.cnf on my01:

```bash
[root@my01 per-cluster]# vi /etc/percona-xtradb-cluster.conf.d/wsrep.cnf
[mysqld]
# Path to Galera library
wsrep_provider=/usr/lib64/galera3/libgalera_smm.so

# Cluster connection URL contains IPs of nodes
#If no IP is found, this implies that a new cluster needs to be created,
#in order to do that you need to bootstrap this node
wsrep_cluster_address=gcomm://192.168.2.1,192.168.2.2,192.168.2.3

# In order for Galera to work correctly binlog format should be ROW
binlog_format=ROW

# MyISAM storage engine has only experimental support
default_storage_engine=InnoDB

# Slave thread to use
wsrep_slave_threads= 8

wsrep_log_conflicts

# This changes how InnoDB autoincrement locks are managed and is a requirement for Galera
innodb_autoinc_lock_mode=2

# Node IP address
wsrep_node_address=192.168.2.1
# Cluster name
wsrep_cluster_name=pxc-cluster

#If wsrep_node_name is not specified, then system hostname will be used
wsrep_node_name=my01

#pxc_strict_mode allowed values: DISABLED,PERMISSIVE,ENFORCING,MASTER
pxc_strict_mode=ENFORCING

# SST method
wsrep_sst_method=xtrabackup-v2

#Authentication for SST method
wsrep_sst_auth="sstuser:1234.Com"
```

Copy the configuration file to the other nodes:

```bash
[root@my01 per-cluster]# scp -p /etc/percona-xtradb-cluster.conf.d/wsrep.cnf 192.168.2.2:/etc/percona-xtradb-cluster.conf.d/
root@192.168.2.2's password:
wsrep.cnf                                  100% 1081     1.1KB/s   00:00
[root@my01 per-cluster]# scp -p /etc/percona-xtradb-cluster.conf.d/wsrep.cnf 192.168.2.3:/etc/percona-xtradb-cluster.conf.d/
root@192.168.2.3's password:
wsrep.cnf
```

On my02 and my03, change the node address and node name:

```bash
[root@my02 per-cluster]# vi /etc/percona-xtradb-cluster.conf.d/wsrep.cnf
wsrep_node_address=192.168.2.2
wsrep_node_name=my02
```

```bash
[root@my03 per-cluster]# vi /etc/percona-xtradb-cluster.conf.d/wsrep.cnf
wsrep_node_address=192.168.2.3
wsrep_node_name=my03
```

Bootstrap the cluster on my01, look up the temporary root password and log in:

```bash
[root@my01 per-cluster]# systemctl start mysql@bootstrap.service
[root@my01 per-cluster]# grep pass /var/log/mysqld.log
2023-05-30T13:54:20.274979Z 1 [Note] A temporary password is generated for root@localhost: L(sz/!ua,6h0
[root@my01 per-cluster]# mysql -uroot -p'L(sz/!ua,6h0'
```

Change the root password and create the SST user:

```
mysql> alter user root@"localhost" identified by "123456";
Query OK, 0 rows affected (0.01 sec)

mysql> grant reload,lock tables,replication client,process on *.* to sstuser@"localhost" identified by "1234.Com";
Query OK, 0 rows affected, 1 warning (0.00 sec)
```

This adds the SST user; it is synchronized to my02 and my03 automatically. The privileges are:

- RELOAD: reload (flush) data
- LOCK TABLES: lock tables
- REPLICATION CLIENT: view server status
- PROCESS: manage the service (view process information)

The user name and password must be exactly the ones set in wsrep_sst_auth in the configuration file.
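Because the SST user name and password must match wsrep_sst_auth, one way to keep the two from drifting apart is to generate the SQL from the configuration file itself. A minimal sketch: `sst_user_sql` is a hypothetical helper, and it assumes the wsrep_sst_auth line has no trailing comment and the password contains no single quote. It emits CREATE USER followed by GRANT instead of GRANT ... IDENTIFIED BY, since the latter form is deprecated in MySQL 5.7 (likely the source of the "1 warning" in the session output).

```bash
#!/usr/bin/env bash
# sst_user_sql FILE  (hypothetical helper)
# Reads wsrep_sst_auth="user:password" from FILE (e.g. wsrep.cnf) and prints
# SQL that creates that user with the SST privileges used in this guide.
sst_user_sql() {
  local auth user pass
  # Take everything after the first '=' and drop the double quotes.
  auth=$(grep -m1 '^wsrep_sst_auth=' "$1" | cut -d= -f2- | tr -d '"')
  if [ -z "$auth" ]; then
    echo "sst_user_sql: wsrep_sst_auth not set in $1" >&2
    return 1
  fi
  user=${auth%%:*}   # text before the first ':'
  pass=${auth#*:}    # text after the first ':'
  printf "CREATE USER '%s'@'localhost' IDENTIFIED BY '%s';\n" "$user" "$pass"
  printf "GRANT RELOAD, LOCK TABLES, PROCESS, REPLICATION CLIENT ON *.* TO '%s'@'localhost';\n" "$user"
}
```

Usage on the freshly bootstrapped my01: `sst_user_sql /etc/percona-xtradb-cluster.conf.d/wsrep.cnf | mysql -uroot -p`. CREATE USER fails if the account already exists, so this is meant for a new cluster.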

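Start MySQL normally on my02 (and likewise on my03) so that it joins the cluster, then check the cluster status:

```bash
[root@my02 per-cluster]# systemctl start mysql
```

```
mysql> show status like "%wsrep%";
| wsrep_incoming_addresses | 192.168.2.3:3306,192.168.2.1:3306,192.168.2.2:3306 |   # cluster members
| wsrep_cluster_weight     | 3                                    |
| wsrep_desync_count       | 0                                    |
| wsrep_evs_delayed        |                                      |
| wsrep_evs_evict_list     |                                      |
| wsrep_evs_repl_latency   | 0/0/0/0/0                            |
| wsrep_evs_state          | OPERATIONAL                          |
| wsrep_gcomm_uuid         | 242efe13-ff69-11ed-9a0c-de8812f5f691 |
| wsrep_cluster_conf_id    | 7                                    |
| wsrep_cluster_size       | 3                                    |   # number of nodes in the cluster
| wsrep_cluster_status     | Primary                              |   # cluster status
| wsrep_connected          | ON                                   |   # connection status
| wsrep_ready              | ON                                   |   # service status (ready to serve)
```

Error: the joining node fails with

```
gcs/src/gcs_group.cpp:gcs_group_handle_join_msg():766: Will never receive state.
```

Troubleshooting: the error log on the joining node showed the cause: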

```
which: no socat in (/usr/sbin:/sbin:/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin)
2023-05-31T02:58:56.691238Z WSREP_SST: [ERROR] ******************* FATAL ERROR **********************
2023-05-31T02:58:56.691850Z WSREP_SST: [ERROR] socat not found in path: /usr/sbin:/sbin:/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin
2023-05-31T02:58:56.692347Z WSREP_SST: [ERROR] ******************************************************
2023-05-31T02:58:56.692551Z 0 [ERROR] WSREP: Failed to read 'ready ' from: wsrep_sst_xtrabackup-v2 --role 'joiner' --address '192.168.2.2' --datadir '/var/lib/mysql/' --defaults-file '/etc/my.cnf' --defaults-group-suffix '' --parent '18678' --mysqld-version '5.7.25-28-57' '' Read: '(null)'
2023-05-31T02:58:56.692574Z 0 [ERROR] WSREP: Process completed with error: wsrep_sst_xtrabackup-v2 --role 'joiner' --address '192.168.2.2' --datadir '/var/lib/mysql/' --defaults-file '/etc/my.cnf' --defaults-group-suffix '' --parent '18678' --mysqld-version '5.7.25-28-57' '' : 2 (No such file or directory)
2023-05-31T02:58:56.692617Z 2 [ERROR] WSREP: Failed to prepare for 'xtrabackup-v2' SST. Unrecoverable.
2023-05-31T02:58:56.692622Z 2 [ERROR] Aborting
```

Solution:

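```bash
yum -y install socat
```

Error:

```
failed to open gcomm backend connection: 110
```

(110 is the Linux error code ETIMEDOUT: the connection attempt to the other nodes timed out.)

Solution: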

Check the hostname-to-IP mappings on every host.


Check that the cluster name in wsrep.cnf is the same on every host.
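To compare the cluster names quickly, here is a minimal sketch that assumes copies of each node's wsrep.cnf have been gathered on one host. `cluster_name_of` and `same_cluster_name` are hypothetical helpers, and the value is read from the last `wsrep_cluster_name=` line in each file (a later setting overrides an earlier one in MySQL option files); trailing comments on that line are not stripped.

```bash
#!/usr/bin/env bash
# cluster_name_of FILE  (hypothetical helper)
# Prints the value of the last wsrep_cluster_name=... line in FILE.
cluster_name_of() {
  sed -n 's/^wsrep_cluster_name[[:space:]]*=[[:space:]]*//p' "$1" | tail -n 1
}

# same_cluster_name FILE...  (hypothetical helper)
# Prints "FILE: name" for every file and succeeds only if all files set
# the same, non-empty wsrep_cluster_name.
same_cluster_name() {
  local f name first="" rc=0
  for f in "$@"; do
    name=$(cluster_name_of "$f")
    printf '%s: %s\n' "$f" "${name:-<unset>}"
    if [ -z "$name" ]; then
      rc=1                      # missing setting counts as a mismatch
    elif [ -z "$first" ]; then
      first="$name"             # first value seen becomes the reference
    elif [ "$name" != "$first" ]; then
      rc=1
    fi
  done
  return "$rc"
}
```

Usage, with hypothetical copy locations: `scp 192.168.2.2:/etc/percona-xtradb-cluster.conf.d/wsrep.cnf /tmp/wsrep-my02.cnf` (and the same for my03), then `same_cluster_name /etc/percona-xtradb-cluster.conf.d/wsrep.cnf /tmp/wsrep-my02.cnf /tmp/wsrep-my03.cnf`.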

Source: https://blog.csdn.net/weixin_53678904/article/details/130966660


Article title: Deploying a PXC Cluster for MySQL (the most detailed guide online)

Article link: https://www.lsjlt.com/news/420033.html (please cite the source link when reposting)
