☘️ Preface

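Before installing, make sure the NVIDIA CUDA™ Toolkit is already installed. If it is not, see the NVIDIA CUDA Installation Guide.

For TensorRT, cuDNN is currently optional; it is now used to accelerate only a small number of layers.

TensorRT can be installed in any of the following three ways: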

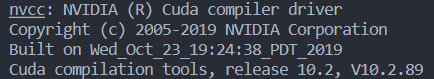

Check the installed CUDA version:

    nvcc -V

For cuDNN versions earlier than 8.0, check the cuDNN version with:

    cat /usr/local/cuda/include/cudnn.h | grep CUDNN_MAJOR -A 2

Output:

    #define CUDNN_MAJOR 5
    #define CUDNN_MINOR 1
    #define CUDNN_PATCHLEVEL 10
    --
    #define CUDNN_VERSION (CUDNN_MAJOR * 1000 + CUDNN_MINOR * 100 + CUDNN_PATCHLEVEL)
    #include "driver_types.h"

For cuDNN 8.0 and later, check the version with:

    cat /usr/local/cuda/include/cudnn_version.h | grep CUDNN_MAJOR -A 2

Output:

    #define CUDNN_MAJOR 8
    #define CUDNN_MINOR 0
    #define CUDNN_PATCHLEVEL 4
    --
    #define CUDNN_VERSION (CUDNN_MAJOR * 1000 + CUDNN_MINOR * 100 + CUDNN_PATCHLEVEL)
    #endif

First, install CUDA; see: https://docs.nvidia.com/cuda/cuda-installation-guide-linux/index.html

Next, install cuDNN; see: https://docs.nvidia.com/deeplearning/cudnn/install-guide/index.html
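Once cuDNN is installed, the two header checks described earlier can be folded into one helper that picks the right file by itself. The following is a minimal sketch, not an official tool: the function names cudnn_version_from_header and cudnn_version are made up for this example, and the default include directory is assumed to be /usr/local/cuda/include as in the commands above.

```shell
#!/bin/sh
# Sketch only: function names are hypothetical, not part of CUDA or cuDNN.

# Extract MAJOR.MINOR.PATCHLEVEL from a single cuDNN header file.
cudnn_version_from_header() {
    awk '
        $1 == "#define" && $2 == "CUDNN_MAJOR"      { major = $3 }
        $1 == "#define" && $2 == "CUDNN_MINOR"      { minor = $3 }
        $1 == "#define" && $2 == "CUDNN_PATCHLEVEL" { patch = $3 }
        END {
            if (major == "") exit 1
            print major "." minor "." patch
        }
    ' "$1"
}

# cuDNN 8.0+ keeps the macros in cudnn_version.h; older releases use cudnn.h.
# $1 (optional): include directory, assumed to default to /usr/local/cuda/include.
cudnn_version() {
    include_dir=${1:-/usr/local/cuda/include}
    if [ -f "$include_dir/cudnn_version.h" ]; then
        cudnn_version_from_header "$include_dir/cudnn_version.h"
    elif [ -f "$include_dir/cudnn.h" ]; then
        cudnn_version_from_header "$include_dir/cudnn.h"
    else
        echo "no cuDNN header found in $include_dir" >&2
        return 1
    fi
}
```

Calling cudnn_version with no argument inspects the default directory; passing a different include directory as the first argument covers non-standard CUDA locations.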

Download the TensorRT local repo file that matches your Ubuntu version and CPU architecture.

Finally, install TensorRT using the Debian packages. Replace the Ubuntu version, CUDA version, and CPU architecture in the commands with your own:

    os="ubuntuxx04"
    tag="8.x.x-cuda-x.x"
    sudo dpkg -i nv-tensorrt-local-repo-${os}-${tag}_1.0-1_amd64.deb
    sudo cp /var/nv-tensorrt-local-repo-${os}-${tag}/*-keyring.gpg /usr/share/keyrings/
    sudo apt-get update

For the full runtime:

    sudo apt-get install tensorrt

For the lean runtime only, instead of tensorrt:

    sudo apt-get install libnvinfer-lean8
    sudo apt-get install libnvinfer-vc-plugin8

For the lean runtime Python package:

    sudo apt-get install python3-libnvinfer-lean

For the dispatch runtime Python package:

    sudo apt-get install python3-libnvinfer-dispatch

For all TensorRT Python packages:

    python3 -m pip install numpy
    sudo apt-get install python3-libnvinfer-dev

The following additional packages will be installed:

    python3-libnvinfer
    python3-libnvinfer-lean
    python3-libnvinfer-dispatch

If you want to install Python packages for the lean or dispatch runtime only, specify these individually rather than installing the dev package.
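The choice between the Python packages listed above can be written down as a small lookup, which is handy in provisioning scripts. This is only a sketch: the function name trt_python_apt_package and the mode labels lean, dispatch, and all are invented for this example; the package names are the ones given above.

```shell
# Sketch only: map a runtime choice to the Python apt package named above.
# The mode labels (lean, dispatch, all) are hypothetical names for this example.
trt_python_apt_package() {
    case "$1" in
        lean)     echo "python3-libnvinfer-lean" ;;
        dispatch) echo "python3-libnvinfer-dispatch" ;;
        all)      echo "python3-libnvinfer-dev" ;;
        *)        echo "unknown mode: $1" >&2; return 1 ;;
    esac
}
```

A typical use would be sudo apt-get install "$(trt_python_apt_package lean)", which installs only the lean runtime bindings.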

If you want to use TensorRT with the UFF converter to convert models from TensorFlow:

    python3 -m pip install protobuf
    sudo apt-get install uff-converter-tf

The graphsurgeon-tf package will also be installed with this command.

If you want to run samples that require onnx-graphsurgeon or use the Python module for your own project:

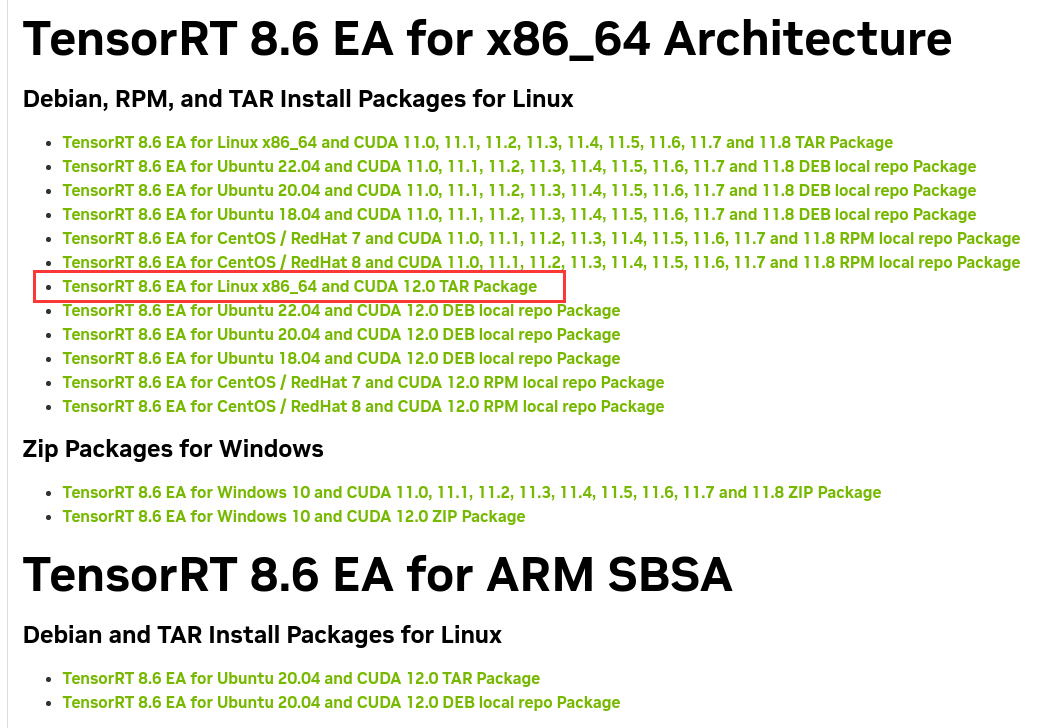

    python3 -m pip install numpy onnx
    sudo apt-get install onnx-graphsurgeon

Check the installed package:

    dpkg-query -W tensorrt

The output is as follows:

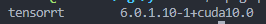
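The Debian steps above involve two small pieces of string handling: building the local repo file name from os and tag, and reading the version out of the dpkg-query output. A minimal sketch of both follows. The function names are made up for this example, and the parser assumes the default dpkg-query -W output format of one "package, tab, version" line per package.

```shell
# Sketch only: function names are hypothetical.

# Build the local repo package name used by the dpkg -i step.
# $1: OS string, $2: tag; both follow the placeholders in the commands above.
trt_local_repo_deb() {
    echo "nv-tensorrt-local-repo-${1}-${2}_1.0-1_amd64.deb"
}

# Read "package<TAB>version" lines from stdin (assumed dpkg-query -W format)
# and print the version recorded for the tensorrt package.
# Exits non-zero when no tensorrt line is present.
trt_version_from_dpkg() {
    awk -F '\t' '$1 == "tensorrt" { print $2; found = 1 } END { exit !found }'
}
```

For example, dpkg-query -W tensorrt | trt_version_from_dpkg prints only the version string, and its exit status can gate later steps in a script.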

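The server was changed at this point in the walkthrough.

EA stands for early access: a release made available before the official release.

GA stands for general availability: a stable release that has been fully tested.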

Extract the archive:

    tar -xzvf TensorRT-8.6.0.12.linux.x86_64-gnu.cuda-12.0.tar.gz

Then change into the extracted directory.
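The archive name encodes both the TensorRT version and the CUDA version, so a script can derive the extracted directory name from it instead of hard-coding it. The sketch below assumes the name follows the pattern of the file used here, TensorRT-<version>.linux.<arch>-gnu.cuda-<cuda>.tar.gz; the function names are invented for this example.

```shell
# Sketch only: assumes the archive name pattern
# TensorRT-<version>.linux.<arch>-gnu.cuda-<cuda>.tar.gz

# Print the directory the archive extracts to, e.g. TensorRT-8.6.0.12.
trt_dir_from_archive() {
    name=${1##*/}              # drop any leading directory
    echo "${name%%.linux.*}"   # keep everything before ".linux."
}

# Print the CUDA version the archive was built for, e.g. 12.0.
trt_cuda_from_archive() {
    name=${1%.tar.gz}          # drop the extension
    echo "${name##*cuda-}"     # keep everything after the last "cuda-"
}
```

With these, cd "$(trt_dir_from_archive TensorRT-8.6.0.12.linux.x86_64-gnu.cuda-12.0.tar.gz)" enters the extracted directory without retyping the version.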

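Add the TensorRT library directory to the environment. Open the shell startup file, add the export line, and then reload the file:

    vim ~/.bashrc
    # add this line to ~/.bashrc; replace /path/to/extracted with the directory where TensorRT was extracted
    export LD_LIBRARY_PATH=/path/to/extracted/TensorRT-8.6.0.12/lib:$LD_LIBRARY_PATH
    source ~/.bashrc

The author uses zsh, so the commands above become:

    vim ~/.zshrc
    # add this line to ~/.zshrc; replace /path/to/extracted with the directory where TensorRT was extracted
    export LD_LIBRARY_PATH=/path/to/extracted/TensorRT-8.6.0.12/lib:$LD_LIBRARY_PATH
    source ~/.zshrc

Next, install the following in order: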

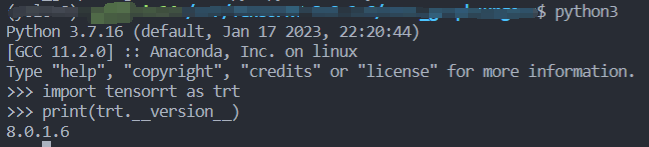

Run each cd below from the directory that contains TensorRT-${version}.

    cd TensorRT-${version}/uff
    python3 -m pip install uff-0.6.9-py2.py3-none-any.whl

    # The following are optional; install them as needed
    cd TensorRT-${version}/uff
    python3 -m pip install uff-0.6.9-py2.py3-none-any.whl

    cd TensorRT-${version}/graphsurgeon
    python3 -m pip install graphsurgeon-0.4.6-py2.py3-none-any.whl

    cd TensorRT-${version}/onnx_graphsurgeon
    python3 -m pip install onnx_graphsurgeon-0.3.12-py2.py3-none-any.whl
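The export line added to the shell startup file earlier prepends the TensorRT lib directory again every time the file is sourced. A minimal sketch of an idempotent alternative follows, assuming a POSIX-style shell; the function names prepend_ld_library_path and shell_rc_file are made up for this example.

```shell
# Sketch only: function names are hypothetical.

# Prepend a directory to LD_LIBRARY_PATH unless it is already listed.
prepend_ld_library_path() {
    case ":${LD_LIBRARY_PATH:-}:" in
        *":$1:"*) ;;   # already present, leave the variable unchanged
        *) LD_LIBRARY_PATH="$1${LD_LIBRARY_PATH:+:$LD_LIBRARY_PATH}" ;;
    esac
    export LD_LIBRARY_PATH
}

# Pick the startup file for the login shell: ~/.zshrc for zsh, else ~/.bashrc.
shell_rc_file() {
    case "${SHELL:-}" in
        */zsh) echo "$HOME/.zshrc" ;;
        *)     echo "$HOME/.bashrc" ;;
    esac
}
```

Placing the function definition and a call such as prepend_ld_library_path /path/to/extracted/TensorRT-8.6.0.12/lib in the file reported by shell_rc_file keeps the variable free of duplicate entries across repeated source runs.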

Build and run the ONNX MNIST sample:

    cd samples/sampleOnnxMNIST/
    make -j8
    cd ../../bin
    ./sample_onnx_mnist

The output is as follows:

    &&&& RUNNING TensorRT.sample_onnx_mnist [TensorRT v8600] # ./sample_onnx_mnist
    [04/06/2023-11:00:53] [I] Building and running a GPU inference engine for Onnx MNIST
    [04/06/2023-11:00:53] [I] [TRT] [MemUsageChange] Init CUDA: CPU +362, GPU +0, now: CPU 367, GPU 375 (MiB)
    [04/06/2023-11:00:56] [I] [TRT] [MemUsageChange] Init builder kernel library: CPU +1209, GPU +264, now: CPU 1652, GPU 639 (MiB)
    [04/06/2023-11:00:56] [W] [TRT] CUDA lazy loading is not enabled. Enabling it can significantly reduce device memory usage and speed up TensorRT initialization. See "Lazy Loading" section of CUDA documentation https://docs.nvidia.com/cuda/cuda-c-programming-guide/index.html#lazy-loading
    [04/06/2023-11:00:56] [I] [TRT] ----------------------------------------------------------------
    [04/06/2023-11:00:56] [I] [TRT] Input filename: ../../../data/mnist/mnist.onnx
    [04/06/2023-11:00:56] [I] [TRT] ONNX IR version: 0.0.3
    [04/06/2023-11:00:56] [I] [TRT] Opset version: 8
    [04/06/2023-11:00:56] [I] [TRT] Producer name: CNTK
    [04/06/2023-11:00:56] [I] [TRT] Producer version: 2.5.1
    [04/06/2023-11:00:56] [I] [TRT] Domain: ai.cntk
    [04/06/2023-11:00:56] [I] [TRT] Model version: 1
    [04/06/2023-11:00:56] [I] [TRT] Doc string:
    [04/06/2023-11:00:56] [I] [TRT] ----------------------------------------------------------------
    [04/06/2023-11:00:56] [W] [TRT] onnx2trt_utils.cpp:374: Your ONNX model has been generated with INT64 weights, while TensorRT does not natively support INT64. Attempting to cast down to INT32.
    [04/06/2023-11:00:56] [I] [TRT] Graph optimization time: 0.000296735 seconds.
    [04/06/2023-11:00:56] [I] [TRT] Local timing cache in use. Profiling results in this builder pass will not be stored.
    [04/06/2023-11:00:57] [I] [TRT] Detected 1 inputs and 1 output network tensors.
    [04/06/2023-11:00:57] [I] [TRT] Total Host Persistent Memory: 24224
    [04/06/2023-11:00:57] [I] [TRT] Total Device Persistent Memory: 0
    [04/06/2023-11:00:57] [I] [TRT] Total Scratch Memory: 0
    [04/06/2023-11:00:57] [I] [TRT] [MemUsageStats] Peak memory usage of TRT CPU/GPU memory allocators: CPU 0 MiB, GPU 4 MiB
    [04/06/2023-11:00:57] [I] [TRT] [BlockAssignment] Started assigning block shifts. This will take 6 steps to complete.
    [04/06/2023-11:00:57] [I] [TRT] [BlockAssignment] Algorithm ShiftNTopDown took 0.008767ms to assign 3 blocks to 6 nodes requiring 32256 bytes.
    [04/06/2023-11:00:57] [I] [TRT] Total Activation Memory: 31744
    [04/06/2023-11:00:57] [I] [TRT] [MemUsageChange] TensorRT-managed allocation in building engine: CPU +0, GPU +4, now: CPU 0, GPU 4 (MiB)
    [04/06/2023-11:00:57] [I] [TRT] Loaded engine size: 0 MiB
    [04/06/2023-11:00:57] [I] [TRT] [MemUsageChange] TensorRT-managed allocation in engine deserialization: CPU +0, GPU +0, now: CPU 0, GPU 0 (MiB)
    [04/06/2023-11:00:57] [I] [TRT] [MemUsageChange] TensorRT-managed allocation in IExecutionContext creation: CPU +0, GPU +0, now: CPU 0, GPU 0 (MiB)
    [04/06/2023-11:00:57] [W] [TRT] CUDA lazy loading is not enabled. Enabling it can significantly reduce device memory usage and speed up TensorRT initialization. See "Lazy Loading" section of CUDA documentation https://docs.nvidia.com/cuda/cuda-c-programming-guide/index.html#lazy-loading
    [04/06/2023-11:00:57] [I] Input:
    [04/06/2023-11:00:57] [I] [ASCII-art rendering of the input digit]
    [04/06/2023-11:00:57] [I] Output:
    [04/06/2023-11:00:57] [I] Prob 0 0.0000 Class 0:
    [04/06/2023-11:00:57] [I] Prob 1 1.0000 Class 1: **********
    [04/06/2023-11:00:57] [I] Prob 2 0.0000 Class 2:
    [04/06/2023-11:00:57] [I] Prob 3 0.0000 Class 3:
    [04/06/2023-11:00:57] [I] Prob 4 0.0000 Class 4:
    [04/06/2023-11:00:57] [I] Prob 5 0.0000 Class 5:
    [04/06/2023-11:00:57] [I] Prob 6 0.0000 Class 6:
    [04/06/2023-11:00:57] [I] Prob 7 0.0000 Class 7:
    [04/06/2023-11:00:57] [I] Prob 8 0.0000 Class 8:
    [04/06/2023-11:00:57] [I] Prob 9 0.0000 Class 9:
    [04/06/2023-11:00:57] [I]
    &&&& PASSED TensorRT.sample_onnx_mnist [TensorRT v8600] # ./sample_onnx_mnist
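The log ends with a line containing "&&&& PASSED TensorRT.sample_onnx_mnist", which is the part worth checking when the sample is run from a script. A minimal sketch, assuming the output was saved to a file first; the function name sample_passed and the log file name are made up for this example.

```shell
# Sketch only: succeed when a saved sample log contains the PASSED marker.
# $1: path to the captured log, $2: sample name as printed in the log.
sample_passed() {
    grep -q -F "&&&& PASSED TensorRT.$2" "$1"
}
```

One way to use it is to capture the run with ./sample_onnx_mnist > run.log 2>&1 and then call sample_passed run.log sample_onnx_mnist; a non-zero exit status means the marker was not found.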


Source: https://blog.csdn.net/BCblack/article/details/129985142

Article title: TensorRT Installation and Testing
