

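I recently studied MTCNN, my favorite face detector. But a detected face on its own isn't much use: we need to know who it is. So let's start the journey of extracting features with facenet.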

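Facenet is Google's face recognition algorithm, published at CVPR 2015. It builds on the observation that photos of the same face under different angles and poses show high cohesion, while photos of different faces show low coupling. It proposes a CNN + triplet mining approach and reaches 99.63% accuracy on the LFW dataset.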

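A CNN maps each face to a feature vector in Euclidean space. In essence, the features of different people's faces end up far apart, while the features of the same person's face end up close together. The network is trained with the prior knowledge that the distance between two faces of the same individual is always smaller than the distance between faces of different individuals.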
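
The training constraint described above is commonly written as a triplet loss. The following is a minimal sketch of that idea, not the training code of this article: the function names, the toy 2-D vectors, and the margin `alpha=0.2` are assumptions chosen for illustration.

```python
def squared_distance(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def triplet_loss(anchor, positive, negative, alpha=0.2):
    """Hinge-style triplet loss for one (anchor, positive, negative) triplet.

    The loss is zero once the anchor is closer to the positive than to the
    negative by at least the margin `alpha` (an assumed value here).
    """
    pos_dist = squared_distance(anchor, positive)
    neg_dist = squared_distance(anchor, negative)
    return max(pos_dist - neg_dist + alpha, 0.0)


# Toy 2-D embeddings (hypothetical values, for illustration only).
anchor = [1.0, 0.0]
positive = [0.9, 0.1]   # same person: close to the anchor
negative = [0.0, 1.0]   # different person: far from the anchor

easy = triplet_loss(anchor, positive, negative)   # constraint already satisfied
hard = triplet_loss(anchor, negative, positive)   # roles swapped: constraint violated
print(easy, hard)
```

A triplet that already satisfies the constraint contributes no loss, which is why mining informative (violating) triplets matters during training.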

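At test time you only need to compute the face embeddings, measure the distance between them, and compare that distance against a threshold to decide whether two face photos belong to the same individual.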
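
A minimal sketch of this verification step, assuming the embeddings are already L2-normalized. The helper names and the threshold of 1.0 are hypothetical; in practice the threshold would be tuned on a validation set.

```python
import math


def euclidean_distance(a, b):
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def is_same_person(emb_a, emb_b, threshold=1.0):
    """Return True when two embeddings are closer than the threshold.

    threshold=1.0 is an assumed value for illustration, not a tuned one.
    """
    return euclidean_distance(emb_a, emb_b) < threshold


# Toy unit-length 2-D embeddings (hypothetical values).
person_a_photo1 = [1.0, 0.0]
person_a_photo2 = [0.8, 0.6]
person_b_photo1 = [0.0, 1.0]

match = is_same_person(person_a_photo1, person_a_photo2)
mismatch = is_same_person(person_a_photo1, person_b_photo1)
print(match, mismatch)
```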

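Put simply, at inference time facenet works like this:

1. Take a face image as input.

2. Extract features with a deep network.

3. Apply L2 normalization.

4. Obtain a 128-dimensional feature vector.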
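
Step 3 can be illustrated in isolation. This is a small pure-Python sketch of L2 normalization on a toy 2-D vector (the real embedding has 128 dimensions); the `epsilon` guard is an assumption that mirrors a common way of avoiding division by zero.

```python
import math


def l2_normalize(vec, epsilon=1e-10):
    """Scale a vector to unit Euclidean length.

    epsilon guards against division by zero for an all-zero vector.
    """
    norm = math.sqrt(max(sum(v * v for v in vec), epsilon))
    return [v / norm for v in vec]


raw = [3.0, 4.0]                 # hypothetical 2-D "embedding"
unit = l2_normalize(raw)         # direction is kept, length becomes 1
length = math.sqrt(sum(v * v for v in unit))
print(unit, length)
```

After this step every embedding lies on the unit hypersphere, so distances between embeddings are bounded (at most 2) and a single threshold is meaningful across images.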

Code download link: https://pan.baidu.com/s/1T2b5u2mZ9yMtKt3TvLxTaw

Extraction code: xmg0

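Inception-ResNetV1 is the backbone network used by facenet.

Its structure is quite interesting!

The figure shows the backbone structure of the whole network:

You can see that the structure is divided into several important parts:

1. stem

2. Inception-resnet-A

3. Inception-resnet-B

4. Inception-resnet-C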

In facenet, the stem takes an input of size 160x160x3 and applies:

three convolutions -> one max pooling -> three convolutions

The Python implementation is as follows:

    inputs = Input(shape=input_shape)
    # 160,160,3 -> 77,77,64
    x = conv2d_bn(inputs, 32, 3, strides=2, padding='valid', name='Conv2d_1a_3x3')
    x = conv2d_bn(x, 32, 3, padding='valid', name='Conv2d_2a_3x3')
    x = conv2d_bn(x, 64, 3, name='Conv2d_2b_3x3')
    # 77,77,64 -> 38,38,64
    x = MaxPooling2D(3, strides=2, name='MaxPool_3a_3x3')(x)
    # 38,38,64 -> 17,17,256
    x = conv2d_bn(x, 80, 1, padding='valid', name='Conv2d_3b_1x1')
    x = conv2d_bn(x, 192, 3, padding='valid', name='Conv2d_4a_3x3')
    x = conv2d_bn(x, 256, 3, strides=2, padding='valid', name='Conv2d_4b_3x3')
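
The shape comments in the stem can be checked by hand. The sketch below traces the spatial size through each stem layer using the usual output-size rules for `'valid'` and `'same'` padding; the helper `conv_output_size` and the `stem_layers` table are illustrative names, not part of the original code.

```python
import math


def conv_output_size(size, kernel, stride=1, padding='same'):
    """Spatial output size of a convolution or pooling layer."""
    if padding == 'same':
        return math.ceil(size / stride)
    # 'valid': no padding, so the window must fit entirely inside the input
    return (size - kernel) // stride + 1


# (layer name, kernel, stride, padding, output channels) for the stem.
# The max-pooling layer keeps the channel count, so it repeats 64.
stem_layers = [
    ('Conv2d_1a_3x3', 3, 2, 'valid', 32),
    ('Conv2d_2a_3x3', 3, 1, 'valid', 32),
    ('Conv2d_2b_3x3', 3, 1, 'same', 64),
    ('MaxPool_3a_3x3', 3, 2, 'valid', 64),
    ('Conv2d_3b_1x1', 1, 1, 'valid', 80),
    ('Conv2d_4a_3x3', 3, 1, 'valid', 192),
    ('Conv2d_4b_3x3', 3, 2, 'valid', 256),
]

size = 160
shapes = []
for name, kernel, stride, padding, channels in stem_layers:
    size = conv_output_size(size, kernel, stride, padding)
    shapes.append((name, size, size, channels))
    print(name, (size, size, channels))
```

The trace reproduces the three checkpoints in the comments: 77x77x64 after the third convolution, 38x38x64 after pooling, and 17x17x256 at the end of the stem.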

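The Inception-resnet-A block has four branches:

1. The input, passed through unchanged.

2. One 1x1 convolution with 32 channels.

3. One 1x1 convolution with 32 channels followed by one 3x3 convolution with 32 channels.

4. One 1x1 convolution with 32 channels followed by two 3x3 convolutions with 32 channels.

The outputs of branches 2, 3, and 4 are concatenated and passed through one more 1x1 convolution, and the result is added to the output of branch 1. In essence, this is a residual structure.

The implementation is as follows: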

    # Three convolutional branches, 32 channels each
    branch_0 = conv2d_bn(x, 32, 1, name=name_fmt('Conv2d_1x1', 0))
    branch_1 = conv2d_bn(x, 32, 1, name=name_fmt('Conv2d_0a_1x1', 1))
    branch_1 = conv2d_bn(branch_1, 32, 3, name=name_fmt('Conv2d_0b_3x3', 1))
    branch_2 = conv2d_bn(x, 32, 1, name=name_fmt('Conv2d_0a_1x1', 2))
    branch_2 = conv2d_bn(branch_2, 32, 3, name=name_fmt('Conv2d_0b_3x3', 2))
    branch_2 = conv2d_bn(branch_2, 32, 3, name=name_fmt('Conv2d_0c_3x3', 2))
    branches = [branch_0, branch_1, branch_2]
    # Concatenate the branches, then project back to the input channel count
    mixed = Concatenate(axis=channel_axis, name=name_fmt('Concatenate'))(branches)
    up = conv2d_bn(mixed, K.int_shape(x)[channel_axis], 1, activation=None, use_bias=True,
                   name=name_fmt('Conv2d_1x1'))
    # Scale the residual before adding it to the shortcut
    up = Lambda(scaling,
                output_shape=K.int_shape(up)[1:],
                arguments={'scale': scale})(up)
    x = add([x, up])
    if activation is not None:
        x = Activation(activation, name=name_fmt('Activation'))(x)
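
To make the residual step concrete, here is a small pure-Python sketch of what `Lambda(scaling)` followed by `add` and the ReLU activation compute element-wise, plus the channel arithmetic of the block. The toy vectors are hypothetical; the 256 input channels come from the stem output and 0.17 is the scale used for this block type in the full model.

```python
def scaled_residual(x, up, scale):
    """Element-wise x + scale * up, i.e. shortcut plus scaled residual."""
    return [xi + scale * ui for xi, ui in zip(x, up)]


def relu(values):
    """Element-wise ReLU."""
    return [max(v, 0.0) for v in values]


# Channel arithmetic for one Inception-resnet-A block.
branch_channels = [32, 32, 32]
concat_channels = sum(branch_channels)          # channels after Concatenate
input_channels = 256                            # stem output: 17,17,256
# The 1x1 projection uses a bias (use_bias=True): weights + biases.
projection_params = concat_channels * input_channels + input_channels

# Toy activations at three positions (hypothetical values).
x = [1.0, -2.0, 0.5]
up = [10.0, 10.0, -10.0]
out = relu(scaled_residual(x, up, scale=0.17))
print(concat_channels, projection_params, out)
```

Scaling the residual by a small factor keeps each block's update modest relative to the shortcut, which is a common way to stabilize training of deep residual Inception networks.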

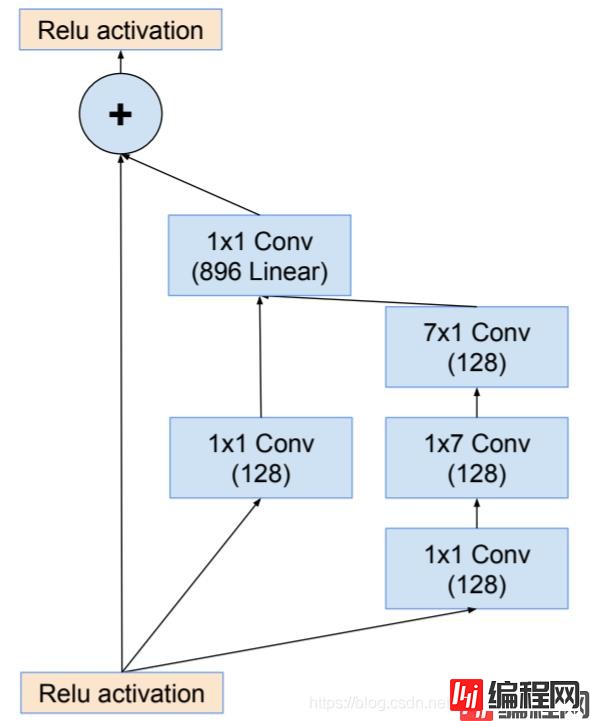

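The Inception-resnet-B block has three branches:

1. The input, passed through unchanged.

2. One 1x1 convolution with 128 channels.

3. One 1x1 convolution with 128 channels, one 1x7 convolution with 128 channels, and one 7x1 convolution with 128 channels.

The outputs of branches 2 and 3 are concatenated and passed through one more 1x1 convolution, and the result is added to the output of branch 1. In essence, this is a residual structure.

The implementation is as follows: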

    # Two convolutional branches, 128 channels each
    branch_0 = conv2d_bn(x, 128, 1, name=name_fmt('Conv2d_1x1', 0))
    branch_1 = conv2d_bn(x, 128, 1, name=name_fmt('Conv2d_0a_1x1', 1))
    # A 1x7 followed by a 7x1 convolution covers a 7x7 receptive field
    branch_1 = conv2d_bn(branch_1, 128, [1, 7], name=name_fmt('Conv2d_0b_1x7', 1))
    branch_1 = conv2d_bn(branch_1, 128, [7, 1], name=name_fmt('Conv2d_0c_7x1', 1))
    branches = [branch_0, branch_1]
    # Concatenate the branches, then project back to the input channel count
    mixed = Concatenate(axis=channel_axis, name=name_fmt('Concatenate'))(branches)
    up = conv2d_bn(mixed, K.int_shape(x)[channel_axis], 1, activation=None, use_bias=True,
                   name=name_fmt('Conv2d_1x1'))
    # Scale the residual before adding it to the shortcut
    up = Lambda(scaling,
                output_shape=K.int_shape(up)[1:],
                arguments={'scale': scale})(up)
    x = add([x, up])
    if activation is not None:
        x = Activation(activation, name=name_fmt('Activation'))(x)
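
One way to see why the block uses a 1x7 convolution followed by a 7x1 convolution is to count weights. The sketch below compares that pair with a single 7x7 convolution at the same channel width; the 7x7 layer is a hypothetical alternative for comparison only and does not appear in the network.

```python
def conv_weights(kernel_h, kernel_w, in_channels, out_channels):
    """Number of weights in a bias-free 2-D convolution."""
    return kernel_h * kernel_w * in_channels * out_channels


channels = 128  # channel width used inside the Inception-resnet-B branch

# 1x7 followed by 7x1, as in the block above.
factorized = (conv_weights(1, 7, channels, channels)
              + conv_weights(7, 1, channels, channels))

# A single 7x7 convolution with the same receptive field (hypothetical).
full = conv_weights(7, 7, channels, channels)

print(factorized, full, full / factorized)
```

Both options see a 7x7 neighborhood, but the factorized pair needs 3.5 times fewer weights at this width.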

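The Inception-resnet-C block has three branches:

1. The input, passed through unchanged.

2. One 1x1 convolution with 192 channels.

3. One 1x1 convolution with 192 channels, one 1x3 convolution with 192 channels, and one 3x1 convolution with 192 channels.

The outputs of branches 2 and 3 are concatenated and passed through one more 1x1 convolution, and the result is added to the output of branch 1. In essence, this is a residual structure.

The implementation is as follows: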

    # Two convolutional branches, 192 channels each
    branch_0 = conv2d_bn(x, 192, 1, name=name_fmt('Conv2d_1x1', 0))
    branch_1 = conv2d_bn(x, 192, 1, name=name_fmt('Conv2d_0a_1x1', 1))
    # A 1x3 followed by a 3x1 convolution covers a 3x3 receptive field
    branch_1 = conv2d_bn(branch_1, 192, [1, 3], name=name_fmt('Conv2d_0b_1x3', 1))
    branch_1 = conv2d_bn(branch_1, 192, [3, 1], name=name_fmt('Conv2d_0c_3x1', 1))
    branches = [branch_0, branch_1]
    # Concatenate the branches, then project back to the input channel count
    mixed = Concatenate(axis=channel_axis, name=name_fmt('Concatenate'))(branches)
    up = conv2d_bn(mixed, K.int_shape(x)[channel_axis], 1, activation=None, use_bias=True,
                   name=name_fmt('Conv2d_1x1'))
    # Scale the residual before adding it to the shortcut
    up = Lambda(scaling,
                output_shape=K.int_shape(up)[1:],
                arguments={'scale': scale})(up)
    x = add([x, up])
    if activation is not None:
        x = Activation(activation, name=name_fmt('Activation'))(x)

    from functools import partial

    from keras.models import Model
    from keras.layers import Activation
    from keras.layers import BatchNormalization
    from keras.layers import Concatenate
    from keras.layers import Conv2D
    from keras.layers import Dense
    from keras.layers import Dropout
    from keras.layers import GlobalAveragePooling2D
    from keras.layers import Input
    from keras.layers import Lambda
    from keras.layers import MaxPooling2D
    from keras.layers import add
    from keras import backend as K

    def scaling(x, scale):
        # Scale the residual branch before it is added to the shortcut
        return x * scale

    def _generate_layer_name(name, branch_idx=None, prefix=None):
        # Build layer names such as 'Block35_1_Branch_0_Conv2d_1x1'
        if prefix is None:
            return None
        if branch_idx is None:
            return '_'.join((prefix, name))
        return '_'.join((prefix, 'Branch', str(branch_idx), name))

    def conv2d_bn(x, filters, kernel_size, strides=1, padding='same', activation='relu', use_bias=False, name=None):
        # Convolution -> (optional) batch normalization -> (optional) activation
        x = Conv2D(filters,
                   kernel_size,
                   strides=strides,
                   padding=padding,
                   use_bias=use_bias,
                   name=name)(x)
        # Batch normalization is applied only when the convolution has no bias
        if not use_bias:
            x = BatchNormalization(axis=3, momentum=0.995, epsilon=0.001,
                                   scale=False, name=_generate_layer_name('BatchNorm', prefix=name))(x)
        if activation is not None:
            x = Activation(activation, name=_generate_layer_name('Activation', prefix=name))(x)
        return x

    def _inception_resnet_block(x, scale, block_type, block_idx, activation='relu'):
        channel_axis = 3
        if block_idx is None:
            prefix = None
        else:
            prefix = '_'.join((block_type, str(block_idx)))
        name_fmt = partial(_generate_layer_name, prefix=prefix)

        if block_type == 'Block35':
            branch_0 = conv2d_bn(x, 32, 1, name=name_fmt('Conv2d_1x1', 0))
            branch_1 = conv2d_bn(x, 32, 1, name=name_fmt('Conv2d_0a_1x1', 1))
            branch_1 = conv2d_bn(branch_1, 32, 3, name=name_fmt('Conv2d_0b_3x3', 1))
            branch_2 = conv2d_bn(x, 32, 1, name=name_fmt('Conv2d_0a_1x1', 2))
            branch_2 = conv2d_bn(branch_2, 32, 3, name=name_fmt('Conv2d_0b_3x3', 2))
            branch_2 = conv2d_bn(branch_2, 32, 3, name=name_fmt('Conv2d_0c_3x3', 2))
            branches = [branch_0, branch_1, branch_2]
        elif block_type == 'Block17':
            branch_0 = conv2d_bn(x, 128, 1, name=name_fmt('Conv2d_1x1', 0))
            branch_1 = conv2d_bn(x, 128, 1, name=name_fmt('Conv2d_0a_1x1', 1))
            branch_1 = conv2d_bn(branch_1, 128, [1, 7], name=name_fmt('Conv2d_0b_1x7', 1))
            branch_1 = conv2d_bn(branch_1, 128, [7, 1], name=name_fmt('Conv2d_0c_7x1', 1))
            branches = [branch_0, branch_1]
        elif block_type == 'Block8':
            branch_0 = conv2d_bn(x, 192, 1, name=name_fmt('Conv2d_1x1', 0))
            branch_1 = conv2d_bn(x, 192, 1, name=name_fmt('Conv2d_0a_1x1', 1))
            branch_1 = conv2d_bn(branch_1, 192, [1, 3], name=name_fmt('Conv2d_0b_1x3', 1))
            branch_1 = conv2d_bn(branch_1, 192, [3, 1], name=name_fmt('Conv2d_0c_3x1', 1))
            branches = [branch_0, branch_1]

        mixed = Concatenate(axis=channel_axis, name=name_fmt('Concatenate'))(branches)
        up = conv2d_bn(mixed, K.int_shape(x)[channel_axis], 1, activation=None, use_bias=True,
                       name=name_fmt('Conv2d_1x1'))
        up = Lambda(scaling,
                    output_shape=K.int_shape(up)[1:],
                    arguments={'scale': scale})(up)
        x = add([x, up])
        if activation is not None:
            x = Activation(activation, name=name_fmt('Activation'))(x)
        return x

    def InceptionResNetV1(input_shape=(160, 160, 3),
                          classes=128,
                          dropout_keep_prob=0.8):
        channel_axis = 3
        inputs = Input(shape=input_shape)
        # 160,160,3 -> 77,77,64
        x = conv2d_bn(inputs, 32, 3, strides=2, padding='valid', name='Conv2d_1a_3x3')
        x = conv2d_bn(x, 32, 3, padding='valid', name='Conv2d_2a_3x3')
        x = conv2d_bn(x, 64, 3, name='Conv2d_2b_3x3')
        # 77,77,64 -> 38,38,64
        x = MaxPooling2D(3, strides=2, name='MaxPool_3a_3x3')(x)

        # 38,38,64 -> 17,17,256
        x = conv2d_bn(x, 80, 1, padding='valid', name='Conv2d_3b_1x1')
        x = conv2d_bn(x, 192, 3, padding='valid', name='Conv2d_4a_3x3')
        x = conv2d_bn(x, 256, 3, strides=2, padding='valid', name='Conv2d_4b_3x3')

        # 5x Block35 (Inception-ResNet-A block):
        for block_idx in range(1, 6):
            x = _inception_resnet_block(x, scale=0.17, block_type='Block35', block_idx=block_idx)

        # Reduction-A block:
        # 17,17,256 -> 8,8,896
        name_fmt = partial(_generate_layer_name, prefix='Mixed_6a')
        branch_0 = conv2d_bn(x, 384, 3, strides=2, padding='valid', name=name_fmt('Conv2d_1a_3x3', 0))
        branch_1 = conv2d_bn(x, 192, 1, name=name_fmt('Conv2d_0a_1x1', 1))
        branch_1 = conv2d_bn(branch_1, 192, 3, name=name_fmt('Conv2d_0b_3x3', 1))
        branch_1 = conv2d_bn(branch_1, 256, 3, strides=2, padding='valid', name=name_fmt('Conv2d_1a_3x3', 1))
        branch_pool = MaxPooling2D(3, strides=2, padding='valid', name=name_fmt('MaxPool_1a_3x3', 2))(x)
        branches = [branch_0, branch_1, branch_pool]
        x = Concatenate(axis=channel_axis, name='Mixed_6a')(branches)

        # 10x Block17 (Inception-ResNet-B block):
        for block_idx in range(1, 11):
            x = _inception_resnet_block(x,
                                        scale=0.1,
                                        block_type='Block17',
                                        block_idx=block_idx)

        # Reduction-B block
        # 8,8,896 -> 3,3,1792
        name_fmt = partial(_generate_layer_name, prefix='Mixed_7a')
        branch_0 = conv2d_bn(x, 256, 1, name=name_fmt('Conv2d_0a_1x1', 0))
        branch_0 = conv2d_bn(branch_0, 384, 3, strides=2, padding='valid', name=name_fmt('Conv2d_1a_3x3', 0))
        branch_1 = conv2d_bn(x, 256, 1, name=name_fmt('Conv2d_0a_1x1', 1))
        branch_1 = conv2d_bn(branch_1, 256, 3, strides=2, padding='valid', name=name_fmt('Conv2d_1a_3x3', 1))
        branch_2 = conv2d_bn(x, 256, 1, name=name_fmt('Conv2d_0a_1x1', 2))
        branch_2 = conv2d_bn(branch_2, 256, 3, name=name_fmt('Conv2d_0b_3x3', 2))
        branch_2 = conv2d_bn(branch_2, 256, 3, strides=2, padding='valid', name=name_fmt('Conv2d_1a_3x3', 2))
        branch_pool = MaxPooling2D(3, strides=2, padding='valid', name=name_fmt('MaxPool_1a_3x3', 3))(x)
        branches = [branch_0, branch_1, branch_2, branch_pool]
        x = Concatenate(axis=channel_axis, name='Mixed_7a')(branches)

        # 5x Block8 (Inception-ResNet-C block):
        for block_idx in range(1, 6):
            x = _inception_resnet_block(x,
                                        scale=0.2,
                                        block_type='Block8',
                                        block_idx=block_idx)
        # Final Block8 without scaling or activation
        x = _inception_resnet_block(x, scale=1., activation=None, block_type='Block8', block_idx=6)

        # Global average pooling
        x = GlobalAveragePooling2D(name='AvgPool')(x)
        x = Dropout(1.0 - dropout_keep_prob, name='Dropout')(x)
        # Fully connected layer down to 128 dimensions
        x = Dense(classes, use_bias=False, name='Bottleneck')(x)
        bn_name = _generate_layer_name('BatchNorm', prefix='Bottleneck')
        x = BatchNormalization(momentum=0.995, epsilon=0.001, scale=False,
                               name=bn_name)(x)

        # Create the model
        model = Model(inputs, x, name='inception_resnet_v1')

        return model
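
The shape comments on the two reduction blocks can be verified with a little arithmetic. In this sketch the helper names are illustrative; the branch channel counts are read from the `Mixed_6a` and `Mixed_7a` code above, where every downsampling branch ends in a 3x3, stride-2, `'valid'` layer and the max-pooling branch keeps the input channel count.

```python
def valid_output_size(size, kernel=3, stride=2):
    """Spatial size after a 'valid' convolution or pooling layer."""
    return (size - kernel) // stride + 1


def reduction(size, in_channels, conv_branch_channels):
    """Shape after concatenating strided conv branches with a max-pool branch.

    The max-pool branch contributes in_channels channels unchanged.
    """
    out_size = valid_output_size(size)
    out_channels = sum(conv_branch_channels) + in_channels
    return out_size, out_channels


size, channels = 17, 256                                      # output of the Block35 stack
size, channels = reduction(size, channels, [384, 256])        # Mixed_6a
after_reduction_a = (size, size, channels)
size, channels = reduction(size, channels, [384, 256, 256])   # Mixed_7a
after_reduction_b = (size, size, channels)
print(after_reduction_a, after_reduction_b)
```

After `Mixed_7a`, global average pooling collapses the 3x3 grid into a 1792-dimensional vector, which the `Bottleneck` dense layer then maps to 128 dimensions.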

Faces are detected with OpenCV's built-in cv2.CascadeClassifier and then compared. The root directory is laid out as follows:

The demo file is as follows:

    import numpy as np
    import cv2
    from net.inception import InceptionResNetV1

    #---------------------------------#
    #   Image preprocessing:
    #   per-image standardization
    #---------------------------------#
    def pre_process(x):
        if x.ndim == 4:
            axis = (1, 2, 3)
            size = x[0].size
        elif x.ndim == 3:
            axis = (0, 1, 2)
            size = x.size
        else:
            raise ValueError('Dimension should be 3 or 4')

        mean = np.mean(x, axis=axis, keepdims=True)
        std = np.std(x, axis=axis, keepdims=True)
        # Lower-bound the std so that flat images do not cause division by zero
        std_adj = np.maximum(std, 1.0/np.sqrt(size))
        y = (x - mean) / std_adj
        return y

    #---------------------------------#
    #   L2 normalization
    #---------------------------------#
    def l2_normalize(x, axis=-1, epsilon=1e-10):
        output = x / np.sqrt(np.maximum(np.sum(np.square(x), axis=axis, keepdims=True), epsilon))
        return output

    #---------------------------------#
    #   Compute the 128-dimensional feature vector
    #---------------------------------#
    def calc_128_vec(model, img):
        face_img = pre_process(img)
        pre = model.predict(face_img)
        pre = l2_normalize(np.concatenate(pre))
        pre = np.reshape(pre, [1, 128])
        return pre

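    #---------------------------------#
    #   Detect and crop the face
    #---------------------------------#
    def get_face_img(cascade, filepaths, margin):
        aligned_images = []
        img = cv2.imread(filepaths)
        # cv2.imread returns a 3-channel BGR image, so convert BGR -> RGB
        img = cv2.cvtColor(img, cv2.COLOR_BGR2RGB)
        faces = cascade.detectMultiScale(img,
                                         scaleFactor=1.1,
                                         minNeighbors=3)
        if len(faces) == 0:
            raise ValueError('No face detected in ' + filepaths)
        # Use the first detected face only
        (x, y, w, h) = faces[0]
        print(x, y, w, h)
        # Expand the box by the margin, clamping at 0 so negative indices do not wrap around
        top, left = max(y - margin//2, 0), max(x - margin//2, 0)
        cropped = img[top:y+h+margin//2,
                      left:x+w+margin//2, :]
        aligned = cv2.resize(cropped, (160, 160))
        aligned_images.append(aligned)
        return np.array(aligned_images)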

    #---------------------------------#
    #   Compute the distance between faces
    #---------------------------------#
    def face_distance(face_encodings, face_to_compare):
        if len(face_encodings) == 0:
            return np.empty((0))
        return np.linalg.norm(face_encodings - face_to_compare, axis=1)

    if __name__ == "__main__":
        cascade_path = './model/haarcascade_frontalface_alt2.xml'
        cascade = cv2.CascadeClassifier(cascade_path)
        image_size = 160
        model = InceptionResNetV1()
        # model.summary()
        model_path = './model/facenet_keras.h5'
        model.load_weights(model_path)

        img1 = get_face_img(cascade, r"img/Larry_Page_0000.jpg", 10)
        img2 = get_face_img(cascade, r"img/Larry_Page_0001.jpg", 10)
        img3 = get_face_img(cascade, r"img/Mark_Zuckerberg_0000.jpg", 10)

        # Same person (two photos of Larry Page), then two different people
        print(face_distance(calc_128_vec(model, img1), calc_128_vec(model, img2)))
        print(face_distance(calc_128_vec(model, img2), calc_128_vec(model, img3)))

The result is:

[0.6534328]

[1.3536944]
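
Because the embeddings are L2-normalized, each Euclidean distance corresponds to a cosine similarity through the identity ||a - b||^2 = 2 - 2(a . b). The sketch below applies that identity to the two distances printed above; the function name is illustrative and not part of the demo.

```python
def cosine_from_distance(distance):
    """Cosine similarity implied by the Euclidean distance between two unit vectors.

    For unit vectors a and b: ||a - b||^2 = 2 - 2 * (a . b).
    """
    return 1.0 - distance ** 2 / 2.0


same_person = cosine_from_distance(0.6534328)        # Larry Page vs. Larry Page
different_people = cosine_from_distance(1.3536944)   # Larry Page vs. Mark Zuckerberg
print(round(same_person, 4), round(different_people, 4))
```

The two photos of the same person have a cosine similarity of roughly 0.79, while the photos of different people are close to orthogonal at roughly 0.08, which matches the intent of the distance threshold.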


Article title: Python neural network facenet face detection and its Keras implementation

Article link: https://www.lsjlt.com/news/117701.html (please cite the source link when reposting)
