Scraping Lianjia second-hand housing listings

import requests

import re

from bs4 import BeautifulSoup

import csv

url = ['https://cq.lianjia.com/ershoufang/']

for i in range(2,101):

url.append('Https://cq.lianjia.com/ershoufang/pg%s/'%(str(i)))

# 模拟谷歌浏览器

headers = {'User-Agent': 'Mozilla/5.0 (windows NT 10.0; Win64; x64) AppleWEBKit/537.36 (Khtml, like Gecko) Chrome/69.0.3497.100 Safari/537.36'}

for u in url:

r = requests.get(u,headers=headers)

soup = BeautifulSoup(r.text,'lxml').find_all('li', class_='clear LOGCLICKDATA')

for i in soup:

ns = i.select('div[class="positionInfo"]')[0].get_text()

region = ns.split('-')[1].replace(' ','').encode('gbk')

rem = ns.split('-')[0].replace(' ','').encode('gbk')

ns = i.select('div[class="houseInfo"]')[0].get_text()

xiaoqu_name = ns.split('|')[0].replace(' ','').encode('gbk')

huxing = ns.split('|')[1].replace(' ','').encode('gbk')

pingfang = ns.split('|')[2].replace(' ','').encode('gbk')

chaoxiang = ns.split('|')[3].replace(' ','').encode('gbk')

zhuangxiu = ns.split('|')[4].replace(' ','').encode('gbk')

danjia = re.findall("\d+",i.select('div[class="unitPrice"]')[0].string)[0]

zongjia = i.select('div[class="totalPrice"]')[0].get_text().encode('gbk')

out=open("/data/data.csv",'a')

csv_write=csv.writer(out)

data = [region,xiaoqu_name,rem,huxing,pingfang,chaoxiang,zhuangxiu,danjia,zongjia]

csv_write.writerow(data)

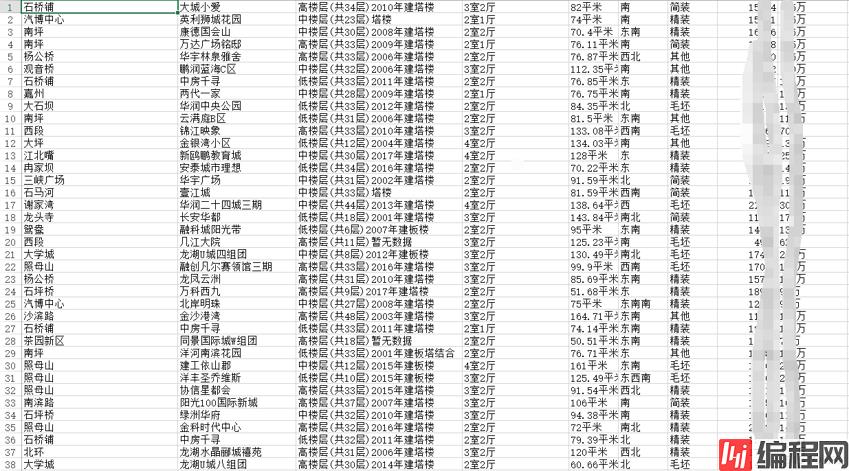

out.close()数据结果
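The script above indexes the `|`-separated houseInfo text directly, so a listing with fewer fields than expected would raise an `IndexError`. As a minimal sketch of a more defensive version of that step, the helpers below pad missing fields with empty strings and return an empty string when no digits are found in the price text. The function names `parse_house_info` and `parse_unit_price` and the sample strings are hypothetical and are not taken from the Lianjia page; the real page text may look different.

```python
import re

# Hypothetical sample strings for illustration; real page text may differ.
SAMPLE_HOUSE_INFO = "示例小区 | 3室2厅 | 98.5平米 | 南 | 精装"
SAMPLE_UNIT_PRICE = "单价12345元/平米"

# Field names follow the variable names used in the script above.
HOUSE_FIELDS = ("xiaoqu_name", "huxing", "pingfang", "chaoxiang", "zhuangxiu")


def parse_house_info(text):
    """Split a '|'-separated houseInfo string into named fields.

    Missing trailing fields become empty strings instead of raising
    IndexError; extra fields beyond the fifth are ignored.
    """
    parts = [p.replace(" ", "") for p in text.split("|")]
    parts += [""] * (len(HOUSE_FIELDS) - len(parts))
    return dict(zip(HOUSE_FIELDS, parts))


def parse_unit_price(text):
    """Return the first run of digits in text, or '' if there is none."""
    match = re.search(r"\d+", text)
    return match.group(0) if match else ""


if __name__ == "__main__":
    print(parse_house_info(SAMPLE_HOUSE_INFO))
    print(parse_unit_price(SAMPLE_UNIT_PRICE))
```

Because these helpers take plain strings, they can be checked without any network request, which makes it easier to adjust the parsing if the page layout changes.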

Article title: Simple Python Crawler

Article link: https://www.lsjlt.com/news/191823.html (please cite this source link when reposting)
