Redis from Beginner to Expert [Application]: Spring Boot Redis Multi-Data-Source Integration with Sentinel, Cluster, and Standalone Modes

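As is well known, before version 6.0 Redis served requests on a single thread (6.0 added optional threaded network I/O, while commands are still executed on one thread). In a project with heavy read and write traffic, one business workload can therefore occupy a single Redis instance for long stretches and cause latency for the others. To address this, some teams run several Redis instances, each holding the data for a different business area or use case. For example, data written by an IoT gateway can go to a dedicated Redis instance while the remaining business data uses another. Multiple instances can also improve overall performance: Redis is an in-memory store, so memory capacity is a bottleneck. When the data set is large, a single instance may not be able to hold all of it and performance degrades, whereas spreading the data over several instances avoids this.

As a result, in some scenarios a single project has to work with the data of two (or more) Redis instances at the same time. That is the problem this article solves.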

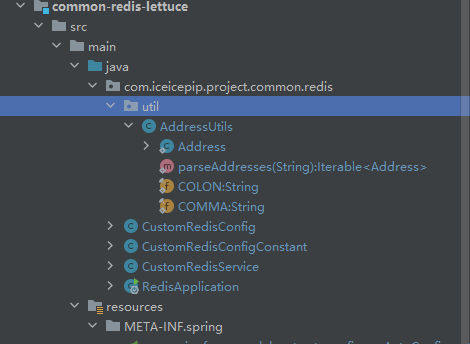

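This article solves the problem by writing a multi-data-source Redis starter component. It supports several Redis data sources, each of which can be configured in Sentinel mode, Cluster mode, or standalone mode. For configuring Sentinel mode on a single instance, see my earlier post "SpringBoot Redis: Configuring Sentinel Mode with Lettuce and Jedis".

The dependencies are listed below. Some may be unnecessary, so trim them to fit your project. The project also needs to use the Spring Boot parent POM.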

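    <dependency>
        <groupId>org.springframework.boot</groupId>
        <artifactId>spring-boot-starter-json</artifactId>
    </dependency>
    <dependency>
        <groupId>org.springframework.boot</groupId>
        <artifactId>spring-boot-configuration-processor</artifactId>
        <optional>true</optional>
    </dependency>
    <dependency>
        <groupId>org.apache.commons</groupId>
        <artifactId>commons-lang3</artifactId>
    </dependency>
    <dependency>
        <groupId>com.alibaba</groupId>
        <artifactId>transmittable-thread-local</artifactId>
    </dependency>
    <dependency>
        <groupId>org.springframework.boot</groupId>
        <artifactId>spring-boot-starter-data-redis</artifactId>
    </dependency>
    <!-- essential: Lettuce connection pooling needs commons-pool2 -->
    <dependency>
        <groupId>org.apache.commons</groupId>
        <artifactId>commons-pool2</artifactId>
    </dependency>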

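Designate the primary data source by its key:

    # example
    custom.primary.redis.key=user

Note that the `CustomRedisConfig` listing later in this article reads this value through `@Value("${customer.primary.redis.key}")`, so use whichever spelling matches the placeholder in your copy of the class.

The following settings are common to all connection modes. Any setting that is omitted falls back to its default.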

    spring.redis.xxx.timeout = 3000
    spring.redis.xxx.maxTotal = 50
    spring.redis.xxx.maxIdle = 50
    spring.redis.xxx.minIdle = 2
    spring.redis.xxx.maxWaitMillis = 10000
    spring.redis.xxx.testOnBorrow = false

    # Redis instance 1: used for the user system, identified by the key "user"
    spring.redis.user.host = 127.0.0.1
    spring.redis.user.port = 6380
    spring.redis.user.password = password
    spring.redis.user.database = 0

    # Redis instance 2: used for the IoT system
    spring.redis.iot.host = 127.0.0.1
    spring.redis.iot.port = 6390
    spring.redis.iot.password = password
    spring.redis.iot.database = 0

    # Redis instance 3: used for xxx
    spring.redis.xxx.host = 127.0.0.1
    spring.redis.xxx.port = 6390
    spring.redis.xxx.password = password
    spring.redis.xxx.database = 0

When there are several Redis instances, repeat the configuration entries below once per instance.
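To make the naming scheme concrete, here is a minimal sketch of how flat `spring.redis.<key>.<property>` entries can be grouped per instance key, which is the shape the starter works with (a map of instance key to property map). `RedisPropertyGrouper` is a hypothetical helper written for illustration only; in the starter itself the grouping is done by Spring Boot's property binding, not by code like this.

```java
import java.util.LinkedHashMap;
import java.util.Map;

/** Hypothetical helper (not part of the starter): groups spring.redis.<key>.<property> entries by <key>. */
final class RedisPropertyGrouper {

    private static final String PREFIX = "spring.redis.";

    static Map<String, Map<String, String>> group(Map<String, String> flat) {
        Map<String, Map<String, String>> grouped = new LinkedHashMap<>();
        for (Map.Entry<String, String> entry : flat.entrySet()) {
            String name = entry.getKey();
            if (!name.startsWith(PREFIX)) {
                continue; // not a Redis property
            }
            String rest = name.substring(PREFIX.length());
            int dot = rest.indexOf('.');
            if (dot <= 0 || dot == rest.length() - 1) {
                continue; // missing instance key or missing property name
            }
            String instanceKey = rest.substring(0, dot);
            // Everything after the instance key stays one property name, e.g. "sentinel.master"
            String property = rest.substring(dot + 1);
            grouped.computeIfAbsent(instanceKey, k -> new LinkedHashMap<>())
                   .put(property, entry.getValue().trim());
        }
        return grouped;
    }

    public static void main(String[] args) {
        Map<String, String> flat = new LinkedHashMap<>();
        flat.put("spring.redis.user.host", "127.0.0.1");
        flat.put("spring.redis.user.port", "6380");
        flat.put("spring.redis.iot.sentinel.master", "mymaster1");
        flat.put("server.port", "8080");
        // prints {user={host=127.0.0.1, port=6380}, iot={sentinel.master=mymaster1}}
        System.out.println(group(flat));
    }
}
```

The nested property names such as `sentinel.master` are kept intact on purpose, because the constants used by the starter (`"sentinel.master"`, `"cluster.nodes"`, and so on) look them up in exactly that form.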

Sentinel mode:

    spring.redis.xxx1.sentinel.master=mymaster1
    spring.redis.xxx1.sentinel.nodes=ip:port,ip:port
    spring.redis.xxx1.password = bD945aAfeb422E22AbAdFb9D2a22bEDd
    spring.redis.xxx1.database = 0
    spring.redis.xxx1.timeout = 3000
    # second instance
    spring.redis.xxx2.sentinel.master=mymaster2
    spring.redis.xxx2.sentinel.nodes=ip:port,ip:port
    spring.redis.xxx2.password = bD945aAfeb422E22AbAdFb9D2a22bEDd
    spring.redis.xxx2.database = 0
    spring.redis.xxx2.timeout = 3000

Cluster mode:

    spring.redis.xxx1.cluster.nodes=ip1:port,ip2:port,ip3:port,ip4:port,ip5:port,ip6:port
    spring.redis.xxx1.cluster.max-redirects=5
    spring.redis.xxx1.password = password
    spring.redis.xxx1.timeout = 3000

From these configuration entries, the starter creates a `RedisTemplate` for each Redis data source.
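The `sentinel.nodes` and `cluster.nodes` values are comma-separated `host:port` lists. The starter parses them with its own `AddressUtils` class, whose source is not shown in this article, so the following is only a sketch of what such parsing might look like. `NodeListParser` and `HostPort` are hypothetical names introduced for this example.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical stand-in for the article's AddressUtils, whose source is not shown. */
final class NodeListParser {

    /** Simple value holder for one host:port pair. */
    static final class HostPort {
        final String host;
        final int port;

        HostPort(String host, int port) {
            this.host = host;
            this.port = port;
        }
    }

    /** Parses "host1:port1,host2:port2" into a list; blank input yields an empty list. */
    static List<HostPort> parse(String nodes) {
        List<HostPort> result = new ArrayList<>();
        if (nodes == null || nodes.trim().isEmpty()) {
            return result;
        }
        for (String token : nodes.split(",")) {
            String trimmed = token.trim();
            if (trimmed.isEmpty()) {
                continue; // tolerate trailing or doubled commas
            }
            int colon = trimmed.lastIndexOf(':');
            if (colon <= 0 || colon == trimmed.length() - 1) {
                throw new IllegalArgumentException("Expected host:port but got '" + trimmed + "'");
            }
            String host = trimmed.substring(0, colon);
            int port = Integer.parseInt(trimmed.substring(colon + 1)); // NumberFormatException on a bad port
            result.add(new HostPort(host, port));
        }
        return result;
    }

    public static void main(String[] args) {
        for (HostPort node : parse("10.0.0.1:26379, 10.0.0.2:26380")) {
            System.out.println(node.host + " -> " + node.port);
        }
    }
}
```

Failing fast on a malformed entry is a design choice of this sketch: a typo in a node list then surfaces at startup rather than as a connection error later.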

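The main idea is as follows.

Define a static `Map` named `redis` that holds the Redis configuration parameters of every data source:

    // static map that stores the Redis configuration parameters
    protected static Map<String, Map<String, String>> redis = new HashMap<>();

Build the `Configuration` object that matches each data source's mode:

    private RedisStandaloneConfiguration buildStandaloneConfig(Map<String, String> param) {
        // ... omitted
    }

    private RedisSentinelConfiguration buildSentinelConfig(Map<String, String> param) {
        // ... omitted
    }

    private RedisClusterConfiguration buildClusterConfig(Map<String, String> param) {
        // ... omitted
    }

Build a `RedisConnectionFactory` from that configuration:

    public RedisConnectionFactory buildLettuceConnectionFactory(String redisKey, Map<String, String> param,
                                                                GenericObjectPoolConfig genericObjectPoolConfig) {
        // ...
    }

Finally, iterate over the data sources defined in the configuration file and call `buildCustomRedisService(k, redisTemplate, stringRedisTemplate);` so that the `RedisTemplate` beans created for the different data sources are registered in the Spring container.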
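The choice between the three configuration types can be sketched as a small pure function. It follows the order used by the `isCluster` and `isSentinel` checks in the full listing: cluster first, then sentinel, otherwise standalone. `RedisModeResolver` and `Mode` are hypothetical names for this illustration; the property keys are the ones defined in `CustomRedisConfigConstant`.

```java
import java.util.HashMap;
import java.util.Map;

/** Hypothetical illustration of the mode-selection order used by the starter. */
final class RedisModeResolver {

    enum Mode { CLUSTER, SENTINEL, STANDALONE }

    static Mode resolve(Map<String, String> param) {
        // Cluster wins as soon as cluster.nodes is present
        if (notEmpty(param.get("cluster.nodes"))) {
            return Mode.CLUSTER;
        }
        // Sentinel needs both the master name and the node list
        if (notEmpty(param.get("sentinel.master")) && notEmpty(param.get("sentinel.nodes"))) {
            return Mode.SENTINEL;
        }
        // Everything else is treated as a single standalone server
        return Mode.STANDALONE;
    }

    private static boolean notEmpty(String value) {
        return value != null && !value.isEmpty();
    }

    public static void main(String[] args) {
        Map<String, String> iot = new HashMap<>();
        iot.put("sentinel.master", "mymaster1");
        iot.put("sentinel.nodes", "10.0.0.1:26379,10.0.0.2:26379");
        System.out.println(resolve(iot)); // prints SENTINEL
    }
}
```

One consequence of this order is that a data source with only `sentinel.master` set (and no `sentinel.nodes`) silently falls through to standalone mode, so it is worth double-checking both keys when a Sentinel setup does not behave as expected.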

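The Spring Boot concepts the source relies on are not explained again here. If you need background on them, see my "SpringBoot Source Code Analysis" series. The relevant interfaces are:

InitializingBean, ApplicationContextAware, BeanPostProcessor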

    package com.iceicepip.project.common.redis;

    import com.iceicepip.project.common.redis.util.AddressUtils;
    import com.fasterxml.jackson.annotation.JsonAutoDetect;
    import com.fasterxml.jackson.annotation.PropertyAccessor;
    import com.fasterxml.jackson.databind.ObjectMapper;
    import org.apache.commons.lang3.StringUtils;
    import org.apache.commons.pool2.impl.GenericObjectPoolConfig;
    import org.springframework.beans.MutablePropertyValues;
    import org.springframework.beans.factory.InitializingBean;
    import org.springframework.beans.factory.annotation.Value;
    import org.springframework.beans.factory.config.BeanPostProcessor;
    import org.springframework.beans.factory.config.ConstructorArgumentValues;
    import org.springframework.beans.factory.support.DefaultListableBeanFactory;
    import org.springframework.beans.factory.support.GenericBeanDefinition;
    import org.springframework.boot.autoconfigure.AutoConfiguration;
    import org.springframework.boot.autoconfigure.condition.ConditionalOnMissingBean;
    import org.springframework.boot.context.properties.ConfigurationProperties;
    import org.springframework.context.ApplicationContext;
    import org.springframework.context.ApplicationContextAware;
    import org.springframework.context.annotation.Bean;
    import org.springframework.core.env.MapPropertySource;
    import org.springframework.core.env.StandardEnvironment;
    import org.springframework.data.redis.connection.*;
    import org.springframework.data.redis.connection.lettuce.LettuceClientConfiguration;
    import org.springframework.data.redis.connection.lettuce.LettuceConnectionFactory;
    import org.springframework.data.redis.connection.lettuce.LettucePoolingClientConfiguration;
    import org.springframework.data.redis.core.RedisTemplate;
    import org.springframework.data.redis.core.StringRedisTemplate;
    import org.springframework.data.redis.serializer.Jackson2JsonRedisSerializer;
    import org.springframework.data.redis.serializer.StringRedisSerializer;

    import java.time.Duration;
    import java.util.*;

    @AutoConfiguration
    @ConfigurationProperties(prefix = "spring")
    public class CustomRedisConfig implements InitializingBean, ApplicationContextAware, BeanPostProcessor {

        // Static map that stores the Redis configuration parameters, keyed by data source
        protected static Map<String, Map<String, String>> redis = new HashMap<>();

        // Key that identifies the primary Redis data source
        @Value("${customer.primary.redis.key}")
        private String primaryKey;

        // InitializingBean callback: once the properties are bound,
        // build the connection factory and the templates for every data source
        @Override
        public void afterPropertiesSet() {
            redis.forEach((k, v) -> {
                // If this key is the primary key, add its settings to the property sources as spring.redis.*
                if (Objects.equals(k, primaryKey)) {
                    Map<String, Object> paramMap = new HashMap<>(4);
                    v.forEach((k1, v1) -> paramMap.put("spring.redis." + k1, v1));
                    MapPropertySource mapPropertySource = new MapPropertySource("redisAutoConfigProperty", paramMap);
                    ((StandardEnvironment) applicationContext.getEnvironment()).getPropertySources().addLast(mapPropertySource);
                }
                // Create the pool configuration and the connection factory
                GenericObjectPoolConfig genericObjectPoolConfig = buildGenericObjectPoolConfig(k, v);
                RedisConnectionFactory lettuceConnectionFactory = buildLettuceConnectionFactory(k, v, genericObjectPoolConfig);
                // Create the RedisTemplate and StringRedisTemplate, then the custom Redis service
                RedisTemplate<String, Object> redisTemplate = buildRedisTemplate(k, lettuceConnectionFactory);
                StringRedisTemplate stringRedisTemplate = buildStringRedisTemplate(k, lettuceConnectionFactory);
                buildCustomRedisService(k, redisTemplate, stringRedisTemplate);
            });
        }

        // RedisTemplate of the primary data source
        @Bean
        public RedisTemplate<String, Object> redisTemplate() {
            Map<String, String> redisParam = redis.get(primaryKey);
            GenericObjectPoolConfig genericObjectPoolConfig = buildGenericObjectPoolConfig(primaryKey, redisParam);
            RedisConnectionFactory lettuceConnectionFactory = buildLettuceConnectionFactory(primaryKey, redisParam, genericObjectPoolConfig);
            RedisTemplate<String, Object> template = new RedisTemplate<>();
            template.setConnectionFactory(lettuceConnectionFactory);
            return template;
        }

        // StringRedisTemplate of the primary data source
        @Bean
        @ConditionalOnMissingBean
        public StringRedisTemplate stringRedisTemplate() {
            Map<String, String> redisParam = redis.get(primaryKey);
            GenericObjectPoolConfig genericObjectPoolConfig = buildGenericObjectPoolConfig(primaryKey, redisParam);
            RedisConnectionFactory lettuceConnectionFactory = buildLettuceConnectionFactory(primaryKey, redisParam, genericObjectPoolConfig);
            StringRedisTemplate template = new StringRedisTemplate();
            template.setConnectionFactory(lettuceConnectionFactory);
            return template;
        }

        // Register the custom Redis service bean
        private void buildCustomRedisService(String k, RedisTemplate<String, Object> redisTemplate, StringRedisTemplate stringRedisTemplate) {
            ConstructorArgumentValues constructorArgumentValues = new ConstructorArgumentValues();
            constructorArgumentValues.addIndexedArgumentValue(0, stringRedisTemplate);
            constructorArgumentValues.addIndexedArgumentValue(1, redisTemplate);
            // Bean name used later to tell the data sources apart
            setCosBean(k + "Redis", CustomRedisService.class, constructorArgumentValues);
        }

        // Register and return the StringRedisTemplate bean
        private StringRedisTemplate buildStringRedisTemplate(String k, RedisConnectionFactory lettuceConnectionFactory) {
            ConstructorArgumentValues constructorArgumentValues = new ConstructorArgumentValues();
            constructorArgumentValues.addIndexedArgumentValue(0, lettuceConnectionFactory);
            setCosBean(k + "StringRedisTemplate", StringRedisTemplate.class, constructorArgumentValues);
            return getBean(k + "StringRedisTemplate");
        }

        // Register and return the RedisTemplate bean
        private RedisTemplate<String, Object> buildRedisTemplate(String k, RedisConnectionFactory lettuceConnectionFactory) {
            // Return the existing template if one is already registered
            if (applicationContext.containsBean(k + "RedisTemplate")) {
                return getBean(k + "RedisTemplate");
            }
            // Create the JSON serializer
            Jackson2JsonRedisSerializer<Object> serializer = new Jackson2JsonRedisSerializer<>(Object.class);
            ObjectMapper mapper = new ObjectMapper();
            mapper.setVisibility(PropertyAccessor.ALL, JsonAutoDetect.Visibility.ANY);
            mapper.enableDefaultTyping(ObjectMapper.DefaultTyping.NON_FINAL);
            serializer.setObjectMapper(mapper);
            // Set the connection factory and the serializers as bean properties
            Map<String, Object> original = new HashMap<>(2);
            original.put("connectionFactory", lettuceConnectionFactory);
            original.put("valueSerializer", serializer);
            original.put("keySerializer", new StringRedisSerializer());
            original.put("hashKeySerializer", new StringRedisSerializer());
            original.put("hashValueSerializer", serializer);
            // Callers only need to name the bean in an annotation to get the template of each Redis instance
            setBean(k + "RedisTemplate", RedisTemplate.class, original);
            return getBean(k + "RedisTemplate");
        }

        public GenericObjectPoolConfig buildGenericObjectPoolConfig(String redisKey, Map<String, String> param) {
            if (applicationContext.containsBean(redisKey + "GenericObjectPoolConfig")) {
                return getBean(redisKey + "GenericObjectPoolConfig");
            }
            Integer maxIdle = StringUtils.isEmpty(param.get(CustomRedisConfigConstant.REDIS_MAXIDLE))
                    ? GenericObjectPoolConfig.DEFAULT_MAX_IDLE
                    : Integer.valueOf(param.get(CustomRedisConfigConstant.REDIS_MAXIDLE));
            Integer minIdle = StringUtils.isEmpty(param.get(CustomRedisConfigConstant.REDIS_MINIDLE))
                    ? GenericObjectPoolConfig.DEFAULT_MIN_IDLE
                    : Integer.valueOf(param.get(CustomRedisConfigConstant.REDIS_MINIDLE));
            Integer maxTotal = StringUtils.isEmpty(param.get(CustomRedisConfigConstant.REDIS_MAXTOTAL))
                    ? GenericObjectPoolConfig.DEFAULT_MAX_TOTAL
                    : Integer.valueOf(param.get(CustomRedisConfigConstant.REDIS_MAXTOTAL));
            Long maxWaitMillis = StringUtils.isEmpty(param.get(CustomRedisConfigConstant.REDIS_MAXWAITMILLIS))
                    ? -1L
                    : Long.valueOf(param.get(CustomRedisConfigConstant.REDIS_MAXWAITMILLIS));
            Boolean testOnBorrow = StringUtils.isEmpty(param.get(CustomRedisConfigConstant.REDIS_TESTONBORROW))
                    ? Boolean.FALSE
                    : Boolean.valueOf(param.get(CustomRedisConfigConstant.REDIS_TESTONBORROW));
            Map<String, Object> original = new HashMap<>(8);
            original.put("maxTotal", maxTotal);
            original.put("maxIdle", maxIdle);
            original.put("minIdle", minIdle);
            original.put("maxWaitMillis", maxWaitMillis);
            original.put("testOnBorrow", testOnBorrow);
            setBean(redisKey + "GenericObjectPoolConfig", GenericObjectPoolConfig.class, original);
            return getBean(redisKey + "GenericObjectPoolConfig");
        }

        public RedisConnectionFactory buildLettuceConnectionFactory(String redisKey, Map<String, String> param,
                                                                    GenericObjectPoolConfig genericObjectPoolConfig) {
            if (applicationContext.containsBean(redisKey + "redisConnectionFactory")) {
                return getBean(redisKey + "redisConnectionFactory");
            }
            String timeout = StringUtils.defaultIfEmpty(param.get(CustomRedisConfigConstant.REDIS_TIMEOUT), "3000");
            LettuceClientConfiguration clientConfig = LettucePoolingClientConfiguration.builder()
                    .commandTimeout(Duration.ofMillis(Long.valueOf(timeout)))
                    .poolConfig(genericObjectPoolConfig)
                    .build();
            // Pick the configuration type: cluster first, then sentinel, otherwise standalone
            Object firstArgument = null;
            if (this.isCluster(param)) {
                RedisClusterConfiguration clusterConfiguration = buildClusterConfig(param);
                firstArgument = clusterConfiguration;
            } else if (this.isSentinel(param)) {
                RedisSentinelConfiguration sentinelConfiguration = buildSentinelConfig(param);
                firstArgument = sentinelConfiguration;
            } else {
                RedisStandaloneConfiguration standaloneConfiguration = buildStandaloneConfig(param);
                firstArgument = standaloneConfiguration;
            }
            ConstructorArgumentValues constructorArgumentValues = new ConstructorArgumentValues();
            constructorArgumentValues.addIndexedArgumentValue(0, firstArgument);
            constructorArgumentValues.addIndexedArgumentValue(1, clientConfig);
            setCosBean(redisKey + "redisConnectionFactory", LettuceConnectionFactory.class, constructorArgumentValues);
            return getBean(redisKey + "redisConnectionFactory");
        }

        private boolean isSentinel(Map<String, String> param) {
            String sentinelMaster = param.get(CustomRedisConfigConstant.REDIS_SENTINEL_MASTER);
            String sentinelNodes = param.get(CustomRedisConfigConstant.REDIS_SENTINEL_NODES);
            return StringUtils.isNotEmpty(sentinelMaster) && StringUtils.isNotEmpty(sentinelNodes);
        }

        private boolean isCluster(Map<String, String> param) {
            String clusterNodes = param.get(CustomRedisConfigConstant.REDIS_CLUSTER_NODES);
            return StringUtils.isNotEmpty(clusterNodes);
        }

        private RedisStandaloneConfiguration buildStandaloneConfig(Map<String, String> param) {
            String host = param.get(CustomRedisConfigConstant.REDIS_HOST);
            String port = param.get(CustomRedisConfigConstant.REDIS_PORT);
            String database = param.get(CustomRedisConfigConstant.REDIS_DATABASE);
            String password = param.get(CustomRedisConfigConstant.REDIS_PASSWORD);
            RedisStandaloneConfiguration standaloneConfig = new RedisStandaloneConfiguration();
            standaloneConfig.setHostName(host);
            standaloneConfig.setDatabase(Integer.valueOf(database));
            standaloneConfig.setPort(Integer.valueOf(port));
            standaloneConfig.setPassword(RedisPassword.of(password));
            return standaloneConfig;
        }

        private RedisSentinelConfiguration buildSentinelConfig(Map<String, String> param) {
            String sentinelMaster = param.get(CustomRedisConfigConstant.REDIS_SENTINEL_MASTER);
            String sentinelNodes = param.get(CustomRedisConfigConstant.REDIS_SENTINEL_NODES);
            String database = param.get(CustomRedisConfigConstant.REDIS_DATABASE);
            String password = param.get(CustomRedisConfigConstant.REDIS_PASSWORD);
            RedisSentinelConfiguration config = new RedisSentinelConfiguration();
            config.setMaster(sentinelMaster);
            // The element type of AddressUtils.parseAddresses is not visible in this listing;
            // AddressUtils.Address is assumed here
            Iterable<AddressUtils.Address> addressIterable = AddressUtils.parseAddresses(sentinelNodes);
            Iterable<RedisNode> redisNodes = transform(addressIterable);
            config.setDatabase(Integer.valueOf(database));
            config.setPassword(RedisPassword.of(password));
            config.setSentinels(redisNodes);
            return config;
        }

        private RedisClusterConfiguration buildClusterConfig(Map<String, String> param) {
            String clusterNodes = param.get(CustomRedisConfigConstant.REDIS_CLUSTER_NODES);
            String clusterMaxRedirects = param.get(CustomRedisConfigConstant.REDIS_CLUSTER_MAX_REDIRECTS);
            String password = param.get(CustomRedisConfigConstant.REDIS_PASSWORD);
            RedisClusterConfiguration config = new RedisClusterConfiguration();
            Iterable<AddressUtils.Address> addressIterable = AddressUtils.parseAddresses(clusterNodes);
            Iterable<RedisNode> redisNodes = transform(addressIterable);
            config.setClusterNodes(redisNodes);
            config.setMaxRedirects(StringUtils.isEmpty(clusterMaxRedirects) ? 5 : Integer.valueOf(clusterMaxRedirects));
            config.setPassword(RedisPassword.of(password));
            return config;
        }

        private Iterable<RedisNode> transform(Iterable<AddressUtils.Address> addresses) {
            List<RedisNode> redisNodes = new ArrayList<>();
            addresses.forEach(address -> redisNodes.add(new RedisServer(address.getHost(), address.getPort())));
            return redisNodes;
        }

        private static ApplicationContext applicationContext;

        public Map<String, Map<String, String>> getRedis() {
            return redis;
        }

        @Override
        public void setApplicationContext(ApplicationContext applicationContext) {
            CustomRedisConfig.applicationContext = applicationContext;
        }

        private static void checkApplicationContext() {
            if (applicationContext == null) {
                throw new IllegalStateException("applicationContext has not been injected; define SpringContextUtil in applicationContext.xml");
            }
        }

        @SuppressWarnings("unchecked")
        public static <T> T getBean(String name) {
            checkApplicationContext();
            if (applicationContext.containsBean(name)) {
                return (T) applicationContext.getBean(name);
            }
            return null;
        }

        public static void removeBean(String beanName) {
            DefaultListableBeanFactory acf = (DefaultListableBeanFactory) applicationContext.getAutowireCapableBeanFactory();
            acf.removeBeanDefinition(beanName);
        }

        // Register a bean whose state is set through properties
        public synchronized void setBean(String beanName, Class<?> clazz, Map<String, Object> original) {
            checkApplicationContext();
            DefaultListableBeanFactory beanFactory = (DefaultListableBeanFactory) applicationContext.getAutowireCapableBeanFactory();
            if (beanFactory.containsBean(beanName)) {
                return;
            }
            GenericBeanDefinition definition = new GenericBeanDefinition();
            // bean class
            definition.setBeanClass(clazz);
            if (beanName.startsWith(primaryKey)) {
                definition.setPrimary(true);
            }
            // property values
            definition.setPropertyValues(new MutablePropertyValues(original));
            // register in the Spring context
            beanFactory.registerBeanDefinition(beanName, definition);
        }

        // Register a bean whose state is set through constructor arguments
        public synchronized void setCosBean(String beanName, Class<?> clazz, ConstructorArgumentValues original) {
            checkApplicationContext();
            DefaultListableBeanFactory beanFactory = (DefaultListableBeanFactory) applicationContext.getAutowireCapableBeanFactory();
            // important: never register the same bean name twice
            if (beanFactory.containsBean(beanName)) {
                return;
            }
            GenericBeanDefinition definition = new GenericBeanDefinition();
            // bean class
            definition.setBeanClass(clazz);
            if (beanName.startsWith(primaryKey)) {
                definition.setPrimary(true);
            }
            // constructor arguments
            definition.setConstructorArgumentValues(new ConstructorArgumentValues(original));
            // register in the Spring context
            beanFactory.registerBeanDefinition(beanName, definition);
        }
    }

The key names of the common configuration entries are defined as constants:

    package com.iceicepip.project.common.redis;

    public class CustomRedisConfigConstant {

        private CustomRedisConfigConstant() {
        }

        public static final String REDIS_HOST = "host";
        public static final String REDIS_PORT = "port";
        public static final String REDIS_TIMEOUT = "timeout";
        public static final String REDIS_DATABASE = "database";
        public static final String REDIS_PASSWORD = "password";
        public static final String REDIS_MAXWAITMILLIS = "maxWaitMillis";
        public static final String REDIS_MAXIDLE = "maxIdle";
        public static final String REDIS_MINIDLE = "minIdle";
        public static final String REDIS_MAXTOTAL = "maxTotal";
        public static final String REDIS_TESTONBORROW = "testOnBorrow";
        public static final String REDIS_SENTINEL_MASTER = "sentinel.master";
        public static final String REDIS_SENTINEL_NODES = "sentinel.nodes";
        public static final String REDIS_CLUSTER_NODES = "cluster.nodes";
        public static final String REDIS_CLUSTER_MAX_REDIRECTS = "cluster.max-redirects";
        public static final String BEAN_NAME_SUFFIX = "Redis";
        public static final String INIT_METHOD_NAME = "getInit";
    }

The `CustomRedisService` class wraps the two templates of one data source and exposes common operations:

    package com.iceicepip.project.common.redis;

    import com.alibaba.ttl.TransmittableThreadLocal;
    import org.apache.commons.lang3.StringUtils;
    import org.slf4j.Logger;
    import org.slf4j.LoggerFactory;
    import org.springframework.aop.framework.Advised;
    import org.springframework.aop.support.AopUtils;
    import org.springframework.beans.factory.NoSuchBeanDefinitionException;
    import org.springframework.beans.factory.annotation.Value;
    import org.springframework.context.ApplicationContext;
    import org.springframework.dao.DataAccessException;
    import org.springframework.data.redis.connection.RedisConnection;
    import org.springframework.data.redis.connection.RedisStringCommands;
    import org.springframework.data.redis.connection.ReturnType;
    import org.springframework.data.redis.connection.StringRedisConnection;
    import org.springframework.data.redis.core.*;
    import org.springframework.data.redis.core.types.Expiration;

    import javax.annotation.Resource;
    import java.nio.charset.StandardCharsets;
    import java.util.*;
    import java.util.concurrent.TimeUnit;
    import java.util.function.Function;
    import java.util.function.Supplier;

    public class CustomRedisService {

        private static final Logger logger = LoggerFactory.getLogger(CustomRedisService.class);

        private StringRedisTemplate stringRedisTemplate;
        private RedisTemplate<String, Object> redisTemplate;

        @Value("${distribute.lock.MaxSeconds:100}")
        private Integer lockMaxSeconds;

        private static Long LOCK_WAIT_MAX_TIME = 120000L;

        @Resource
        private ApplicationContext applicationContext;

        // The type argument of this field is not recoverable from the listing, so it is left raw
        private TransmittableThreadLocal redisLockReentrant = new TransmittableThreadLocal<>();

        // Lua script: delete the lock key only if it still holds the caller's value
        private static final String RELEASE_LOCK_SCRIPT = "if redis.call('get', KEYS[1]) == ARGV[1] then return redis.call('del', KEYS[1]) else return 0 end";
        private static final String REDIS_LOCK_KEY_PREFIX = "xxx:redisLock";
        private static final String REDIS_NAMESPACE_PREFIX = ":";

        @Value("${spring.application.name}")
        private String appName;

        public CustomRedisService() {
        }

        public CustomRedisService(StringRedisTemplate stringRedisTemplate, RedisTemplate<String, Object> redisTemplate) {
            this.stringRedisTemplate = stringRedisTemplate;
            this.redisTemplate = redisTemplate;
        }

        public StringRedisTemplate getStringRedisTemplate() {
            return stringRedisTemplate;
        }

        public RedisTemplate<String, Object> getRedisTemplate() {
            return redisTemplate;
        }

        // Operations follow

        public void saveOrUpdate(HashMap<String, String> values) throws Exception {
            ValueOperations<String, String> valueOps = stringRedisTemplate.opsForValue();
            valueOps.multiSet(values);
        }

        public void saveOrUpdate(String key, String value) throws Exception {
            ValueOperations<String, String> valueOps = stringRedisTemplate.opsForValue();
            valueOps.set(key, value);
        }

        public String getValue(String key) throws Exception {
            ValueOperations<String, String> valueOps = stringRedisTemplate.opsForValue();
            return valueOps.get(key);
        }

        public void setValue(String key, String value) throws Exception {
            ValueOperations<String, String> valueOps = stringRedisTemplate.opsForValue();
            valueOps.set(key, value);
        }

        public void setValue(String key, String value, long timeout, TimeUnit unit) throws Exception {
            ValueOperations<String, String> valueOps = stringRedisTemplate.opsForValue();
            valueOps.set(key, value, timeout, unit);
        }

        public List<String> getValues(Collection<String> keys) throws Exception {
            ValueOperations<String, String> valueOps = stringRedisTemplate.opsForValue();
            return valueOps.multiGet(keys);
        }

        public void delete(String key) throws Exception {
            stringRedisTemplate.delete(key);
        }

        public void delete(Collection<String> keys) throws Exception {
            stringRedisTemplate.delete(keys);
        }

        public void addSetValues(String key, String... values) throws Exception {
            SetOperations<String, String> setOps = stringRedisTemplate.opsForSet();
            setOps.add(key, values);
        }

        public Set<String> getSetValues(String key) throws Exception {
            SetOperations<String, String> setOps = stringRedisTemplate.opsForSet();
            return setOps.members(key);
        }

        public String getSetRandomMember(String key) throws Exception {
            SetOperations<String, String> setOps = stringRedisTemplate.opsForSet();
            return setOps.randomMember(key);
        }

        public void delSetValues(String key, Object... values) throws Exception {
            SetOperations<String, String> setOps = stringRedisTemplate.opsForSet();
            setOps.remove(key, values);
        }

        // Despite the name, this returns the size of a plain set (opsForSet), as in the original listing
        public Long getZsetValuesCount(String key) throws Exception {
            return stringRedisTemplate.opsForSet().size(key);
        }

        public void addHashSet(String key, HashMap<String, String> args) throws Exception {
            HashOperations<String, String, String> hashsetOps = stringRedisTemplate.opsForHash();
            hashsetOps.putAll(key, args);
        }

        public Map<String, String> getHashSet(String key) throws Exception {
            HashOperations<String, String, String> hashsetOps = stringRedisTemplate.opsForHash();
            return hashsetOps.entries(key);
        }

        public Map<byte[], byte[]> getHashByteSet(String key) throws Exception {
            RedisConnection connection = null;
            try {
                connection = redisTemplate.getConnectionFactory().getConnection();
                return connection.hGetAll(key.getBytes());
            } catch (Exception e) {
                throw new Exception(e);
            } finally {
                // Always hand the connection back
                if (Objects.nonNull(connection) && !connection.isClosed()) {
                    connection.close();
                }
            }
        }

        // Note: unlike getHashByteSet, the next two methods do not close the connection they obtain
        public List<byte[]> getHashMSet(byte[] key, byte[][] fields) throws Exception {
            return stringRedisTemplate.getConnectionFactory().getConnection().hMGet(key, fields);
        }

        public Boolean setHashMSet(byte[] key, byte[] field, byte[] value) throws Exception {
            return stringRedisTemplate.getConnectionFactory().getConnection().hSet(key, field, value);
        }

        // ... (the remaining methods are cut off in the source)
    }
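The lock methods of `CustomRedisService` are cut off in the listing, but the `RELEASE_LOCK_SCRIPT` constant shows the release rule: delete the key only if it still holds the caller's value, and return the number of keys deleted. The sketch below models that compare-and-delete rule in plain Java with a `ConcurrentHashMap`. It is an in-memory illustration only (no Redis, no expiry), and `InMemoryLockModel` and its method names are hypothetical.

```java
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.ConcurrentMap;

/** Hypothetical in-memory model of the compare-and-delete release rule; it does not talk to Redis. */
final class InMemoryLockModel {

    private final ConcurrentMap<String, String> store = new ConcurrentHashMap<>();

    /** Acquire: succeeds only if the key is absent (comparable to a set-if-absent write). */
    boolean tryLock(String key, String ownerToken) {
        return store.putIfAbsent(key, ownerToken) == null;
    }

    /** Release: removes the key only if it still maps to ownerToken; returns 1 on success, 0 otherwise. */
    long release(String key, String ownerToken) {
        return store.remove(key, ownerToken) ? 1L : 0L;
    }

    public static void main(String[] args) {
        InMemoryLockModel locks = new InMemoryLockModel();
        System.out.println(locks.tryLock("order:42", "token-A")); // true
        System.out.println(locks.release("order:42", "token-B")); // 0, wrong owner
        System.out.println(locks.release("order:42", "token-A")); // 1, owner matches
    }
}
```

The point of comparing the value before deleting is that a client whose lock has already been taken over by someone else cannot release the new owner's lock by accident. In Redis the check and the delete have to happen atomically, which is why the listing uses a Lua script rather than a separate `GET` followed by `DEL`.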

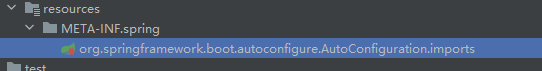

In the project, create the directory common-redis-lettuce/src/main/resources/META-INF/spring and, inside it, a file named org.springframework.boot.autoconfigure.AutoConfiguration.imports. This file (supported since Spring Boot 2.7) tells Spring Boot which additional configuration classes to import during auto-configuration. Each line holds the fully qualified name of one configuration class.

File contents:

    com.iceicepip.project.common.redis.CustomRedisConfig

With this entry in place, Spring Boot loads `CustomRedisConfig` at application startup and merges it with the other auto-configurations to produce the complete application configuration.

Here `xxx` is the data source key defined in the Spring Boot configuration file, for example `user` or `iot`.

    @Autowired
    @Qualifier("xxxRedis")
    private CustomRedisService xxxRedisService;

    @Autowired
    @Qualifier("userRedis")
    private CustomRedisService userRedisService;

Alternatively, use the `RedisTemplate` beans directly.

    @Autowired
    @Qualifier("userRedisTemplate")
    private RedisTemplate userRedisTemplate;

    @Autowired
    @Qualifier("xxxStringRedisTemplate")
    private StringRedisTemplate xxxStringRedisTemplate;

    @Autowired
    @Qualifier("xxxRedisTemplate")
    private RedisTemplate xxxRedisTemplate;

https://github.com/wangshuai67/Redis-Tutorial-2023
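The qualifier names follow directly from the suffixes that `CustomRedisConfig` appends to each data source key. The sketch below spells out that naming rule and also reproduces the `startsWith(primaryKey)` check the listing uses to mark beans as primary. `RedisBeanNames` is a hypothetical helper for illustration; the suffixes and the prefix check are taken from the listing.

```java
/** Hypothetical helper that mirrors the bean-naming rule of the CustomRedisConfig listing. */
final class RedisBeanNames {

    static String service(String key) {
        return key + "Redis";
    }

    static String redisTemplate(String key) {
        return key + "RedisTemplate";
    }

    static String stringRedisTemplate(String key) {
        return key + "StringRedisTemplate";
    }

    /** Same test the listing applies before calling setPrimary(true). */
    static boolean markedPrimary(String beanName, String primaryKey) {
        return beanName.startsWith(primaryKey);
    }

    public static void main(String[] args) {
        System.out.println(service("iot"));                                         // iotRedis
        System.out.println(stringRedisTemplate("user"));                            // userStringRedisTemplate
        System.out.println(markedPrimary(redisTemplate("user"), "user"));           // true
        System.out.println(markedPrimary(redisTemplate("iot"), "user"));            // false
        System.out.println(markedPrimary(redisTemplate("userLog"), "user"));        // true as well
    }
}
```

The last line points at a naming pitfall: because the check is a prefix match, a data source key that starts with the primary key (such as a hypothetical `userLog` next to `user`) would also be marked primary. Choosing keys that are not prefixes of one another sidesteps the issue.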

Hi everyone, I'm Bingdian (冰点). That is all for today's Redis [Practice] post on Spring Boot Redis multi-data-source integration with Sentinel and Cluster modes. If you have questions or ideas, leave them in the comments.

Source: https://blog.csdn.net/wangshuai6707/article/details/131924586
